Why Active Parameters Matter More Than Total VRAM

I spent most of 2024 convinced I’d need a serious hardware upgrade just to run decent local AI models. Buying dual RTX 3090s off eBay felt like the absolute bare minimum to get anything remotely close to GPT-3.5 performance without constantly feeding my credit card to cloud providers. We were all just brute-forcing massive 70-billion-parameter dense models and accepting the terrible latency as a cost of doing business locally.

But then the math on hardware requirements completely flipped. The industry pivot to Sparse Mixture of Experts (SMoE) architectures changed how I provision hardware for my local applications. Instead of lighting up every single parameter in a neural network for every single word generated, these models route each token to a small number of “expert” sub-networks. You might have a massive model loaded into memory, but only a fraction of it actually fires for any given token.
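If the routing idea feels abstract, here is a minimal toy sketch of top-k expert routing in plain Python. It is not how any particular model or library implements it: the function names (`route_token`, `moe_layer`), the scalar "token", and the choice of `top_k=2` are all assumptions I made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k=2):
    """Pick the top_k experts for one token and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(token, experts, router_scores, top_k=2):
    """Run only the chosen experts and mix their outputs by router weight."""
    weights = route_token(router_scores, top_k)
    # Every expert is resident in memory, but only top_k of them do any work.
    output = sum(w * experts[i](token) for i, w in weights.items())
    return output, sorted(weights)

if __name__ == "__main__":
    # Hypothetical stand-ins for expert sub-networks: expert k multiplies by k + 1.
    experts = [lambda x, k=k: x * (k + 1) for k in range(8)]
    scores = [0.1, 2.0, 0.3, 1.5, -0.4, 0.0, 0.9, -1.2]
    out, used = moe_layer(1.0, experts, scores)
    print(f"experts used: {used} of {len(experts)}, output: {out:.3f}")
```

The point to notice is that `experts` holds all eight functions the whole time, yet each call only executes two of them. That is the compute-versus-capacity split the rest of this post keeps running into.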

This sounds like a neat academic trick until you actually look at your system monitors.

The benchmark that made me ditch dense models

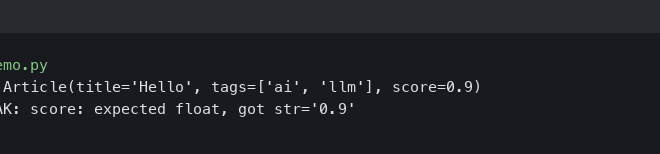

Let me give you some hard numbers. Last Tuesday, I was benchmarking a local RAG (Retrieval-Augmented Generation) pipeline on my Ubuntu 24.04 workstation. It has 64GB of system RAM and those two used RTX 3090s I mentioned earlier.

I loaded up a standard dense 70B model using vLLM 0.4.1. It barely fit across the 48GB of pooled VRAM with 4-bit quantization. The fans spun up like a jet engine. I was getting maybe 14 tokens a second. That’s readable, but when you’re passing it 4,000 tokens of context from a database query, you’re sitting there staring at a blinking cursor for way too long. It breaks your train of thought.

Then I swapped it out for an SMoE model. Total size on disk was roughly similar, about 46GB. But here’s the kicker: it only activates about 12 billion parameters per token during inference.

My throughput shot up to 68 tokens per second. The GPU utilization graph in nvidia-smi looked completely different. Instead of a sustained 100% thermal-throttled nightmare, it showed rapid, spiky workloads. The latency drop is ridiculous. I’m getting output that rivals the big proprietary APIs, but it’s running entirely on hardware sitting under my desk.
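A quick back-of-the-envelope check makes that jump less mysterious. The sketch below assumes token generation is limited mainly by how many bytes of weights get read per token, and that both models use the same 4-bit quantization. I only stated the quantization for the dense run, so treat the second part as an assumption. The helper name `weight_gb_per_token` is made up for this example.

```python
def weight_gb_per_token(active_params_billion, bits_per_weight=4):
    """Approximate GB of weights read from memory to generate one token."""
    return active_params_billion * bits_per_weight / 8

dense_gb = weight_gb_per_token(70)  # dense model: every parameter is active
smoe_gb = weight_gb_per_token(12)   # SMoE model: only the routed experts are active

predicted_speedup = dense_gb / smoe_gb
measured_speedup = 68 / 14  # tokens/sec figures from the two runs above

print(f"predicted ~{predicted_speedup:.1f}x, measured ~{measured_speedup:.1f}x")
# prints: predicted ~5.8x, measured ~4.9x
```

The measured gain lands a bit under the naive prediction, which is what you’d expect once routing overhead, attention, and KV cache reads are in the mix. It is close enough to suggest the speedup really does come from touching fewer weights per token.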

The memory bandwidth trap

But there is a massive gotcha here that hardware reviewers keep ignoring.

People see “12B active parameters” and assume they can run this on a base M2 Mac or a single 16GB gaming GPU. You can’t. I’ve watched developers waste hours trying to cram these expert models onto underpowered rigs because they don’t understand the difference between compute requirements and memory capacity.

You still have to load the entire model into VRAM. All the experts have to sit there, ready to be called upon at a millisecond’s notice. If you try to offload half of that 46GB SMoE model to your system RAM, your inference speed will absolutely tank. I tried this exact setup using llama.cpp last month. The moment part of every forward pass depended on weights sitting in system memory instead of VRAM, my 68 tokens/sec plummeted to 3 tokens/sec.
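Here is a rough way to sanity-check a setup before you download 46GB of weights. This is a toy model, not a profiler. It assumes per-token time splits in proportion to where the weights live, and the 2GB reserve for KV cache and buffers is a guess you should adjust. The `slow_tps` value of 1.5 is hypothetical too: it is simply what this toy model implies for the offloaded half, given my 68 and 3 tokens/sec numbers.

```python
def fits_in_vram(model_gb, vram_gb, reserve_gb=2.0):
    """True if the whole model fits, leaving reserve_gb for KV cache and buffers."""
    return model_gb + reserve_gb <= vram_gb

def blended_tokens_per_sec(fast_tps, slow_tps, offloaded_fraction):
    """Harmonic mix: per-token time is the sum of time spent in each memory tier."""
    seconds_per_token = (1 - offloaded_fraction) / fast_tps + offloaded_fraction / slow_tps
    return 1 / seconds_per_token

if __name__ == "__main__":
    for vram_gb in (16, 24, 48):
        print(f"46GB model on {vram_gb}GB of VRAM: fits={fits_in_vram(46, vram_gb)}")
    for fraction in (0.0, 0.1, 0.5):
        tps = blended_tokens_per_sec(68, 1.5, fraction)
        print(f"{fraction:.0%} offloaded: ~{tps:.1f} tokens/sec")
```

Under these assumptions, half the model in system RAM works out to about 2.9 tokens/sec, in line with the 3 I saw. Offloading even 10% drops you to roughly 12.5. The slow tier dominates almost immediately, which is why “it mostly fits” is not good enough.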

It was brutal. The OOM killer actually nuked my desktop environment at one point because I miscalculated my available RAM. That was a fun reboot.

So your hardware shopping list changes. You don’t need the absolute fastest compute cores anymore. You need VRAM capacity and memory bandwidth. A used Mac Studio with 128GB of unified memory is honestly a better buy for local AI right now than a top-tier gaming PC with a single 24GB card, purely because you can hold massive expert models entirely in fast memory.

Where the silicon is heading

We’re looking at a weird split in the hardware market right now. Consumer GPUs are still heavily biased toward rasterization and compute, while local AI developers are begging for cheap, high-capacity VRAM cards.

I expect dense models above 30B to basically disappear for local edge deployments by Q1 2027. The economics just don’t make sense anymore. Why would I burn 400 watts of power running a dense 70B model when an SMoE model gives me comparable reasoning capabilities at a fraction of the compute cost?
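To put a rough number on those economics, here is the energy per token using my benchmark throughput. I did not measure power draw during the SMoE run, so this sketch assumes the same 400 watts for both. Given the spikier GPU utilization that is probably pessimistic for the SMoE side, but I can’t confirm it. The function name `joules_per_token` is just for this example.

```python
def joules_per_token(watts, tokens_per_sec):
    """Energy per generated token: a watt is one joule per second."""
    return watts / tokens_per_sec

dense_j = joules_per_token(400, 14)  # dense 70B at 14 tokens/sec
smoe_j = joules_per_token(400, 68)   # SMoE at 68 tokens/sec, same assumed draw

print(f"dense: ~{dense_j:.1f} J/token, SMoE: ~{smoe_j:.1f} J/token")
# prints: dense: ~28.6 J/token, SMoE: ~5.9 J/token
```

Even with the SMoE model charged the full 400 watts, each token costs roughly a fifth of the energy.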

So if you’re building a rig today, ignore the teraflops marketing. Look at the memory bus width and total VRAM. The software has figured out how to be smart about compute. Now we just need enough memory to hold all those experts at once.