GPT-4o vs. The Terminal: A 2026 Reality Check

It’s 5:30 AM. The coffee is already lukewarm, and I’m staring at a Python script that’s supposed to be parsing pre-market earnings calls. Back in 2024, when OpenAI dropped GPT-4o and Microsoft showed it off on Azure alongside its Cobalt 100 chips at Build, the hype machine went into overdrive. Every LinkedIn influencer with a “Futurist” tag claimed financial analysts were extinct. Dead. Replaced by a chatbot.

Well, that’s not entirely accurate — I’m still here. But my job is… different.

We aren’t extinct, but the way we consume financial news has shifted so hard it’s barely recognizable. And if you’re still refreshing a standard news feed in 2026 without an AI layer filtering the noise, you’re probably bringing a knife to a nuclear standoff. Here’s what actually happened to financial news analysis after the dust settled on the “AI Revolution,” and why small models, not the giant ones, are currently saving my sanity.

The Latency War: Why “Smart” Wasn’t Enough

I remember watching the GPT-4o demos. Instant audio response! Vision capabilities! It looked cool. But in finance, “cool” doesn’t pay the rent. Speed does.

When we first tried integrating GPT-4o into our news sentiment pipeline back in late ’24, it was a disaster. Not because the model was dumb — it was brilliant — but because it was too heavy. We were hitting API latency of 400-600ms per request. In the world of algorithmic trading news, that’s an eternity. You might as well send the data via carrier pigeon.

That’s where the hardware finally caught up. We migrated our inference workloads to Azure instances running those Cobalt 100 CPUs last year (specifically the Dplsv6 series). The difference wasn’t subtle.

My benchmark from last Tuesday:

- Old Setup (Standard GPU instance): 380ms average token generation time for news summarization.

- Cobalt 100 Setup: 145ms.
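
If you want to run this kind of comparison yourself, here’s a minimal timing harness. It’s a sketch, not the script behind my numbers. `benchmark`, `speedup`, and `latency_reduction` are hypothetical helpers, and the lambda in the usage lines stands in for whatever summarization call you actually make. Plugging in the averages above, 380 / 145 works out to roughly a 2.6x speedup, or about 62% less time per request.

```python
import math
import statistics
import time
from typing import Callable, Sequence


def benchmark(fn: Callable[[str], object], inputs: Sequence[str], warmup: int = 2) -> dict:
    """Call fn on each input and return latency statistics in milliseconds."""
    # Warm-up calls are discarded so one-time costs (model load, cold caches) don't skew results.
    for text in inputs[:warmup]:
        fn(text)
    latencies_ms = []
    for text in inputs:
        start = time.perf_counter()
        fn(text)
        latencies_ms.append((time.perf_counter() - start) * 1000)
    if not latencies_ms:
        raise ValueError("inputs must not be empty")
    latencies_ms.sort()
    # Nearest-rank 95th percentile on the sorted sample.
    p95 = latencies_ms[max(0, math.ceil(0.95 * len(latencies_ms)) - 1)]
    return {
        "mean_ms": statistics.mean(latencies_ms),
        "p50_ms": statistics.median(latencies_ms),
        "p95_ms": p95,
    }


def speedup(old_ms: float, new_ms: float) -> float:
    """How many times faster the new setup is (old / new)."""
    return old_ms / new_ms


def latency_reduction(old_ms: float, new_ms: float) -> float:
    """Fraction of latency removed, e.g. 0.62 means 62% less time per request."""
    return 1 - new_ms / old_ms


if __name__ == "__main__":
    # Hypothetical stand-in for a real summarization call.
    print(benchmark(lambda headline: headline.lower(), ["Revenue missed by 5%"] * 50))
    print(round(speedup(380, 145), 2), round(latency_reduction(380, 145), 2))  # 2.62 0.62
```

I report p95 next to the mean on purpose: a handful of slow requests is exactly what hurts in a news pipeline, and an average hides them.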

Small Models Are Eating the News

Here’s the thing nobody wants to admit: You don’t need a genius-level AI to tell you that “Revenue missed by 5%” is bad news.

Using a massive frontier model to parse simple headlines is like using a flamethrower to light a birthday candle. It works, but it’s expensive and dangerous. The real shift in 2025 wasn’t the big models getting bigger; it was the small models getting competent.

Microsoft released that trio of small language models (Phi-3 mini, small, and medium) back in the day, and honestly, I ignored them at first. Big mistake. Today, I run a fine-tuned version of Phi-3-small (the 7B-parameter member of that trio) locally on our edge nodes. It handles 90% of the initial news filtering.
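
Here’s a rough sketch of that division of labor. The keyword scorer is a hypothetical stand-in for the fine-tuned model (no weights or prompts from my setup are reproduced here), and the cue lists and 0.8 threshold are illustrative assumptions. The shape is what matters: headlines with no signal get dropped, confident calls stay local, and mixed ones escalate to a bigger model.

```python
import re
from dataclasses import dataclass

# Hypothetical cue words standing in for what a fine-tuned small model learns.
NEGATIVE_CUES = {"missed", "cut", "cuts", "downgrade", "probe", "recall", "lawsuit"}
POSITIVE_CUES = {"beat", "beats", "raised", "upgrade", "record", "approval"}


@dataclass
class Triage:
    label: str         # "positive", "negative", "mixed", or "irrelevant"
    confidence: float  # share of matched cues that agree with the label


def small_model_score(headline: str) -> Triage:
    """Crude stand-in for the local model: count whole-word cue matches."""
    words = re.findall(r"[a-z]+", headline.lower())
    neg = sum(word in NEGATIVE_CUES for word in words)
    pos = sum(word in POSITIVE_CUES for word in words)
    total = neg + pos
    if total == 0:
        return Triage("irrelevant", 1.0)
    if neg == pos:
        return Triage("mixed", 0.5)
    label = "negative" if neg > pos else "positive"
    return Triage(label, max(neg, pos) / total)


def route(headline: str, threshold: float = 0.8) -> str:
    """Drop noise, keep confident calls local, escalate the rest to a larger model."""
    result = small_model_score(headline)
    if result.label == "irrelevant":
        return "drop"
    if result.label == "mixed" or result.confidence < threshold:
        return "escalate"
    return result.label


if __name__ == "__main__":
    for headline in ["Revenue missed by 5%", "Guidance raised but margins cut", "CEO attends charity gala"]:
        print(headline, "->", route(headline))
```

With these toy cues, “Revenue missed by 5%” stays local as negative, “Guidance raised but margins cut” escalates, and the charity-gala headline is dropped before any model spends a token on it.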

The “Context Window” Trap

Everyone obsessed over 1-million-token context windows. “You can feed it the whole annual report!” they said.

Yeah, you can. But should you?

I tried dumping a full merger agreement (400+ pages) into the context window last month. The retrieval accuracy for specific clauses in the middle of the document tanked. It’s the “lost in the middle” phenomenon, and it hasn’t gone away.
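
A common workaround is to stop feeding the whole document: split it into overlapping chunks, rank them against the question, and send only the best few. This is a sketch of the idea, not a description of my setup. Plain keyword overlap stands in for the embedding search you’d likely use in practice, `chunk_words` and `top_chunks` are hypothetical helpers, and the chunk size and overlap are arbitrary defaults.

```python
import re
from collections import Counter


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping word windows; overlap reduces the chance a clause is split."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break
    return chunks


def top_chunks(text: str, query: str, k: int = 3, size: int = 200, overlap: int = 40) -> list[str]:
    """Rank chunks by how often query terms appear; a crude stand-in for embedding search."""
    terms = set(re.findall(r"[a-z]+", query.lower()))
    scored = []
    for index, chunk in enumerate(chunk_words(text, size, overlap)):
        counts = Counter(re.findall(r"[a-z]+", chunk.lower()))
        scored.append((sum(counts[t] for t in terms), index, chunk))
    # Highest score first; ties keep document order via the index.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [chunk for score, _, chunk in scored[:k] if score > 0]
```

The payoff is that a clause buried on page 200 arrives at the start of a short prompt instead of the middle of a huge one.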

Real-World Workflow: The “Earnings Swarm”

Let’s get specific. Here is what my actual Tuesday looked like during the tech earnings dump.

I don’t read the reports anymore. Not initially. I have a “swarm” of three agents set up:

- The Scraper: Grabs the PDF immediately upon release.

- The Accountant: A specialized small model trained specifically on GAAP vs. Non-GAAP reconciliation tables. It extracts the raw numbers into a CSV.

- The Skeptic: A GPT-4o instance prompted to act like a short-seller. I literally tell it: “Find the three most concerning footnotes in this document regarding liability or regulatory risk.”
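
Here’s a stripped-down sketch of how those three roles can hand off to each other. Everything in it is hypothetical scaffolding: the Scraper is assumed to have already turned the PDF into text, `ROW_PATTERN` assumes reconciliation rows look like `Net income  1,234  1,567`, and `skeptic_messages` only builds a chat-style request in the common role/content format without sending it anywhere. The Skeptic’s instruction is the one quoted above.

```python
import csv
import io
import re

# The Skeptic's instruction, quoted from the workflow above.
SKEPTIC_PROMPT = (
    "Find the three most concerning footnotes in this document "
    "regarding liability or regulatory risk."
)

# Hypothetical row format: a text label followed by a GAAP and a non-GAAP figure.
ROW_PATTERN = re.compile(
    r"^(?P<item>[A-Za-z][A-Za-z ()&-]*?)\s+"
    r"(?P<gaap>-?[\d,]*\d(?:\.\d+)?)\s+"
    r"(?P<non_gaap>-?[\d,]*\d(?:\.\d+)?)$"
)


def accountant(report_text: str) -> str:
    """Pull GAAP / non-GAAP pairs from reconciliation lines into CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["line_item", "gaap", "non_gaap"])
    for line in report_text.splitlines():
        match = ROW_PATTERN.match(line.strip())
        if match:
            writer.writerow([
                match["item"].strip(),
                match["gaap"].replace(",", ""),
                match["non_gaap"].replace(",", ""),
            ])
    return buffer.getvalue()


def skeptic_messages(report_text: str) -> list[dict]:
    """Build a chat-style request; sending it to GPT-4o is left to whatever client you use."""
    return [
        {"role": "system", "content": "You are a short-seller reviewing an earnings filing."},
        {"role": "user", "content": f"{SKEPTIC_PROMPT}\n\n{report_text}"},
    ]


def run_swarm(report_text: str) -> dict:
    # The Scraper is assumed to have already fetched the PDF and extracted its text.
    return {"csv": accountant(report_text), "skeptic_request": skeptic_messages(report_text)}
```

Keeping the Accountant deterministic and cheap, and reserving the big model for the judgment call, is the same small-model-first split described earlier.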

Last quarter, “The Skeptic” flagged a footnote about a pending EU antitrust probe on page 84 of a major tech filing. The stock barely moved initially because the headline numbers were good. Two hours later, when the human analysts finally read page 84, the stock dipped 4%. My terminal flagged it in 45 seconds.

When It Breaks (And It Does)

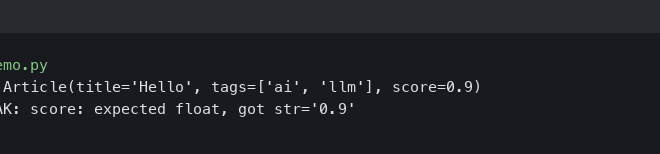

Don’t believe the “seamless integration” marketing copy. This stuff breaks constantly.

Just yesterday, I was debugging a pipeline failure. The issue? A company released their earnings report with a slightly different HTML structure than usual — they used nested <div> tags for the tables instead of standard <table> elements. The vision model parsed it fine, but the text-based scraper choked. Result: KeyError: 'revenue'.
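
A more defensive extractor treats leaf `<div>`s the same way it treats `<td>` cells, and fails with a readable message instead of a bare `KeyError`. This is a minimal sketch built on Python’s standard-library `html.parser`. The pairing rule (a text cell followed by a numeric cell) is an assumption that fits simple earnings tables, not filings in general, and `extract_metrics` and `get_metric` are hypothetical names.

```python
import re
from html.parser import HTMLParser

# Matches values like 12,345 / $12,345 / (1,200) / 12.5
NUMBER = re.compile(r"\(?\$?-?[\d,]*\d(?:\.\d+)?\)?")


class CellTextCollector(HTMLParser):
    """Collect text from <td>/<th> cells and leaf <div>s, in document order."""

    CELL_TAGS = {"td", "th", "div"}

    def __init__(self):
        super().__init__()
        self.cells: list[str] = []
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.CELL_TAGS:
            self._flush()

    def handle_endtag(self, tag):
        if tag in self.CELL_TAGS:
            self._flush()

    def handle_data(self, data):
        self._buffer.append(data)

    def close(self):
        super().close()
        self._flush()

    def _flush(self):
        # Collapse whitespace; skip empty runs between tags.
        text = " ".join("".join(self._buffer).split())
        if text:
            self.cells.append(text)
        self._buffer = []


def parse_number(text: str) -> float:
    """Convert '$12,345' or accounting-style '(1,200)' to a float."""
    negative = text.startswith("(") or "-" in text
    cleaned = text.strip("()").replace("$", "").replace(",", "").replace("-", "")
    return -float(cleaned) if negative else float(cleaned)


def extract_metrics(html: str) -> dict[str, float]:
    """Pair each text cell with the numeric cell that follows it, whatever the tags."""
    collector = CellTextCollector()
    collector.feed(html)
    collector.close()
    cells = collector.cells
    metrics = {}
    for label, value in zip(cells, cells[1:]):
        if NUMBER.fullmatch(value) and not NUMBER.fullmatch(label):
            metrics[label.lower()] = parse_number(value)
    return metrics


def get_metric(metrics: dict[str, float], name: str) -> float:
    """Look up a metric, reporting the labels that were found instead of a bare KeyError."""
    if name not in metrics:
        raise LookupError(f"{name!r} not found; parsed labels: {sorted(metrics)}")
    return metrics[name]
```

The point isn’t this particular parser; it’s that the table shape should be a detail the extractor absorbs, and a miss should tell you what it did find.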

We also have to talk about “drift.” We had a sentiment model that worked perfectly in 2024. By mid-2025, the market language had changed. CEO-speak became more guarded, more generic. The model started rating everything as “Neutral” because executives stopped using strong adjectives. We had to retrain it on a dataset of 2025 transcripts just to get it to recognize subtle negativity again.
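
Retraining fixed it; catching it sooner is mostly a matter of watching the label mix. Here’s a hypothetical monitor that compares the recent share of “Neutral” calls with the rate seen when the model was validated and flags a large gap. The baseline, window size, and tolerance are illustrative assumptions you’d tune for your own feed.

```python
from collections import deque


class LabelDriftMonitor:
    """Flag when one label's share of recent predictions drifts far from its baseline."""

    def __init__(self, baseline_share: float, label: str = "Neutral",
                 window: int = 500, tolerance: float = 0.15):
        self.baseline_share = baseline_share  # e.g. Neutral rate on the validation set
        self.label = label
        self.tolerance = tolerance            # allowed absolute gap before alerting
        self.recent = deque(maxlen=window)    # sliding window of the latest predictions

    def observe(self, predicted_label: str) -> None:
        self.recent.append(predicted_label)

    def current_share(self) -> float:
        if not self.recent:
            return 0.0
        hits = sum(1 for label in self.recent if label == self.label)
        return hits / len(self.recent)

    def drifted(self) -> bool:
        # Only judge a full window so a few early predictions can't trigger an alert.
        if len(self.recent) < self.recent.maxlen:
            return False
        return abs(self.current_share() - self.baseline_share) > self.tolerance
```

An alert like this doesn’t tell you the model is wrong, only that its output no longer looks like the data it was validated on, which is exactly the cue to go pull fresh transcripts.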

The Bottom Line

If you’re in finance news in 2026, you aren’t competing with AI. You’re competing with other people who know how to chain these models together better than you do.

The Cobalt chips made it affordable. The small models made it fast. GPT-4o made it capable of understanding nuance. But you still need a human to look at the output and say, “Wait, that doesn’t make sense.”

I still check the raw source. I still trust my gut. But I wouldn’t go back to the pre-2024 way of working if you paid me. The noise is just too loud now. You need the machine to filter the signal, even if you have to kick it every once in a while to keep it running.