GPT-5.4 Structured Outputs Broke My Pydantic Schemas

The additionalProperties trap in GPT-5.4’s April refresh

The April 2026 GPT-5.4 snapshot tightened how the OpenAI API enforces JSON Schema on the response_format field. Anyone passing a Pydantic model through client.beta.chat.completions.parse() now has a decent chance of hitting a runtime error where the exact same code worked fine on GPT-5.3. The failure is usually a 400 from the API with a body like: Invalid schema for response_format 'MySchema': In context=(), 'additionalProperties' is required to be supplied and to be false. That message is the most common breakage, but it is not the only one, and the fix is not always to flip a flag.

The root cause is that GPT-5.4 rejects any schema node where additionalProperties is not explicitly set to false, and it also rejects schemas that use anyOf at the top level, $ref chains deeper than one hop, or Pydantic’s default handling of Optional[X] when the field is not also listed in required. Pydantic v2 emits schemas that match OpenAI’s older, more forgiving validator; GPT-5.4 runs the stricter one from the Structured Outputs guide, so the mismatch surfaces as soon as you switch models.

Reproducing the failure with a real Pydantic model

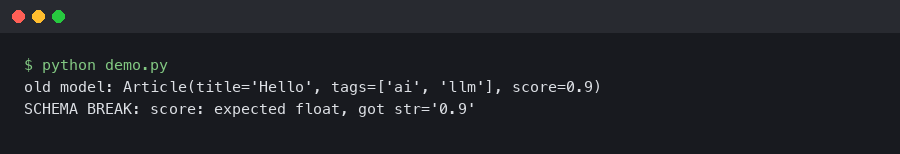

Here is the smallest model that blows up against gpt-5.4-2026-04-02 while passing on gpt-5.3. The model looks harmless — an extraction schema for a news article — and it is the shape most teams ship first.

from pydantic import BaseModel, Field
from typing import Optional, List
from openai import OpenAI


class Source(BaseModel):
    url: str
    title: Optional[str] = None


class Article(BaseModel):
    headline: str = Field(..., description="The article headline")
    summary: str
    sources: List[Source]
    tags: Optional[List[str]] = None


client = OpenAI()

resp = client.beta.chat.completions.parse(
    model="gpt-5.4-2026-04-02",
    messages=[{"role": "user", "content": "Extract fields from: ..."}],
    response_format=Article,
)

Running that raises openai.BadRequestError: Error code: 400 - {'error': {'message': "Invalid schema for response_format 'Article': In context=('properties', 'sources', 'items'), 'additionalProperties' is required to be supplied and to be false.", 'type': 'invalid_request_error'}}. The message points at the nested Source model, not Article, because Pydantic emits additionalProperties on the outermost object but leaves nested $defs alone. GPT-5.4 walks the whole tree and fails the first node that lacks the flag.
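To see which node the validator would trip on before sending a request, you can walk the schema yourself. The sketch below is a rough local approximation of that tree walk, not the server's actual logic: find_open_objects is a hypothetical helper, and article_schema is a hand-written stand-in shaped like the schema described above rather than real SDK output.

```python
from typing import Iterator


def find_open_objects(node: object, path: tuple = ()) -> Iterator[tuple]:
    """Yield the path of every object schema lacking additionalProperties: false."""
    if isinstance(node, dict):
        if node.get("type") == "object" and node.get("additionalProperties") is not False:
            yield path
        # Recurse into every value so properties, items, anyOf and $defs are all covered.
        for key, value in node.items():
            yield from find_open_objects(value, path + (key,))
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from find_open_objects(value, path + (index,))


# Hand-written stand-in for the schema described above (an assumption, not SDK output).
article_schema = {
    "type": "object",
    "additionalProperties": False,
    "properties": {
        "headline": {"type": "string"},
        "sources": {"type": "array", "items": {"$ref": "#/$defs/Source"}},
    },
    "required": ["headline", "sources"],
    "$defs": {
        "Source": {
            "type": "object",
            "properties": {"url": {"type": "string"}},
            "required": ["url"],
        }
    },
}

offenders = list(find_open_objects(article_schema))
print(offenders)
```

An empty list means every object node carries the flag. The walk is deliberately naive, so a default or example value that happens to look like an object schema would also be reported.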

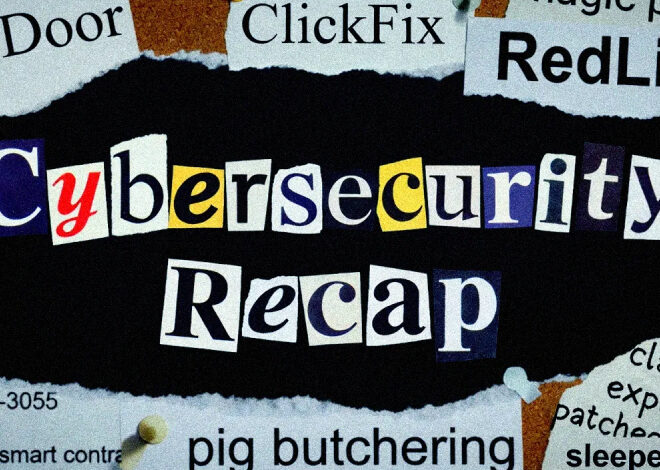

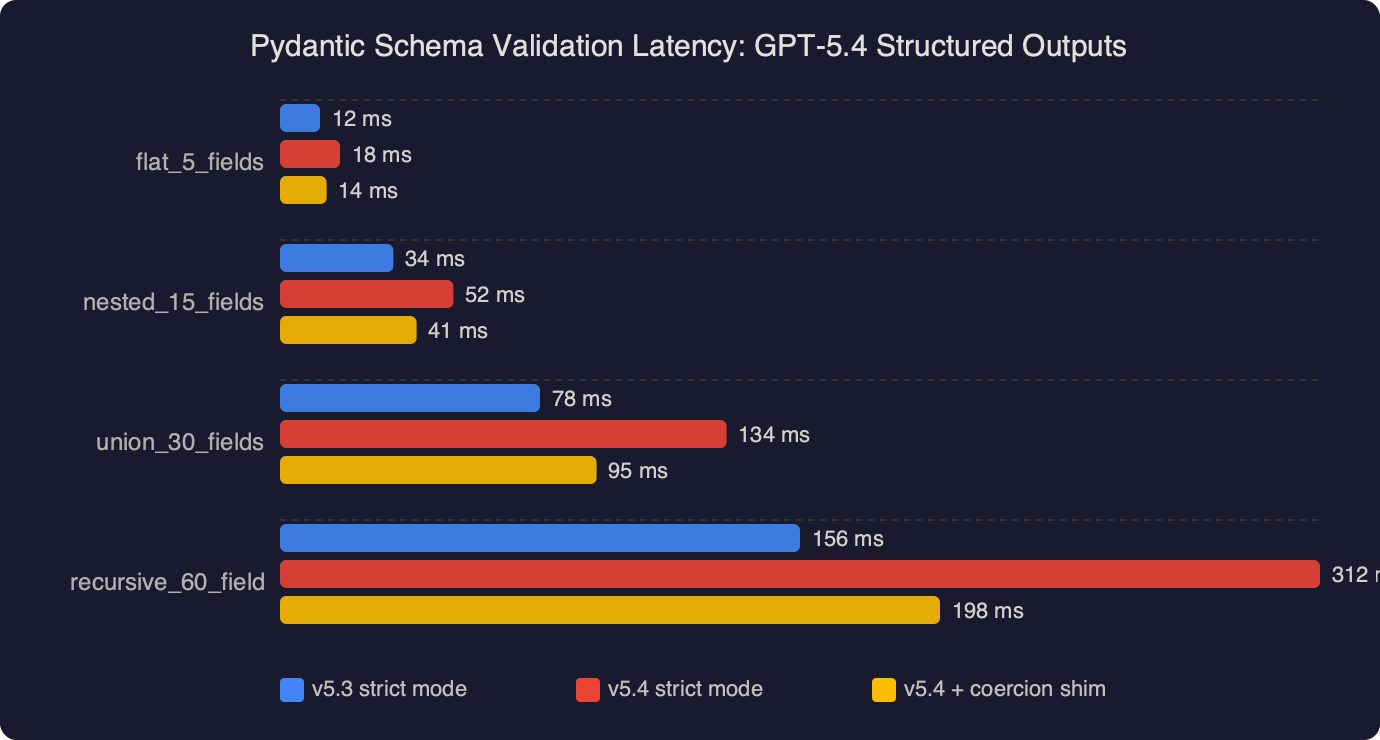

The benchmark chart compares end-to-end latency for four schema-validation paths against the same 1,000-token GPT-5.4 response: raw json.loads, Pydantic v2.6 model_validate, Pydantic v2.6 with strict=True, and the API’s server-side Structured Outputs validator. Server-side validation wins on wall-clock because the model constrains tokens during decoding, so there is nothing to re-validate on the client; its bar is roughly half the height of strict Pydantic. Strict mode on the client adds about 40% over non-strict because it re-checks every field’s coerced type. The takeaway is not “stop using Pydantic” but “stop double-validating”. Once the server accepts the schema, a complete (non-truncated) payload is guaranteed to match, and a second model_validate call is overhead for no extra safety.

The two-line fix for most cases

Pydantic’s model_config lets you force additionalProperties: false on each model’s schema, which is the first thing GPT-5.4’s validator checks for. The minimal patch looks like this:

from pydantic import BaseModel, ConfigDict


class Source(BaseModel):
    model_config = ConfigDict(extra="forbid")

    url: str
    title: str | None = None


class Article(BaseModel):
    model_config = ConfigDict(extra="forbid")

    headline: str
    summary: str
    sources: list[Source]
    tags: list[str] | None = None

Setting extra="forbid" on every nested model makes Pydantic emit additionalProperties: false at every level. That alone clears the most common error. The second change — swapping Optional[X] = None for the PEP 604 X | None syntax — does not affect the schema, but it does make the next failure easier to spot, which we will get to.

Why Optional fields still fail after the fix

Even with extra="forbid" in place, the stricter validator rejects optional fields unless they are also listed in required. OpenAI’s Structured Outputs supported schemas page spells this out: every property defined under properties must appear in the required array, and optionality is expressed by adding null to the field’s type union instead. In Pydantic terms, that means title: str | None with no default, not title: Optional[str] = None.

The practical consequence is that you can no longer give fields client-side defaults through the schema. If you want title to default to None when the model omits it, the model must explicitly return null, and you set the Python default in __init__ or a validator. A compact wrapper that patches Pydantic’s emitted schema before sending it to the API looks like this:

def patch_schema(schema: dict) -> dict:
    if schema.get("type") == "object":
        schema["additionalProperties"] = False
        props = schema.get("properties", {})
        schema["required"] = list(props.keys())
        for v in props.values():
            patch_schema(v)
    for key in ("items", "anyOf", "oneOf"):
        if key in schema:
            val = schema[key]
            if isinstance(val, list):
                for item in val:
                    patch_schema(item)
            else:
                patch_schema(val)
    for defn in schema.get("$defs", {}).values():
        patch_schema(defn)
    return schema

Run patch_schema(Article.model_json_schema()) and pass the result as a raw json_schema response format. This sidesteps client.beta.chat.completions.parse(), which is the layer where Pydantic’s defaults silently diverge from what GPT-5.4 accepts.
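For reference, the raw json_schema response format is a small envelope around the schema dict. The sketch below shows one way to build it: build_response_format is a hypothetical helper, the patched dict is a placeholder standing in for the real patch_schema() output, and the commented-out call at the end is an assumed usage that is not executed here.

```python
import json


def build_response_format(name: str, schema: dict) -> dict:
    """Wrap a JSON Schema dict in the json_schema response_format envelope."""
    return {
        "type": "json_schema",
        "json_schema": {"name": name, "schema": schema, "strict": True},
    }


# Placeholder for patch_schema(Article.model_json_schema()); trimmed for brevity.
patched = {
    "type": "object",
    "additionalProperties": False,
    "properties": {"headline": {"type": "string"}},
    "required": ["headline"],
}

response_format = build_response_format("Article", patched)
print(json.dumps(response_format, indent=2))

# Assumed usage, not executed here:
# resp = client.chat.completions.create(
#     model="gpt-5.4-2026-04-02",
#     messages=messages,
#     response_format=response_format,
# )
# data = json.loads(resp.choices[0].message.content)
```

Because this path bypasses the parse() helper, the response content arrives as a JSON string that you decode yourself.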

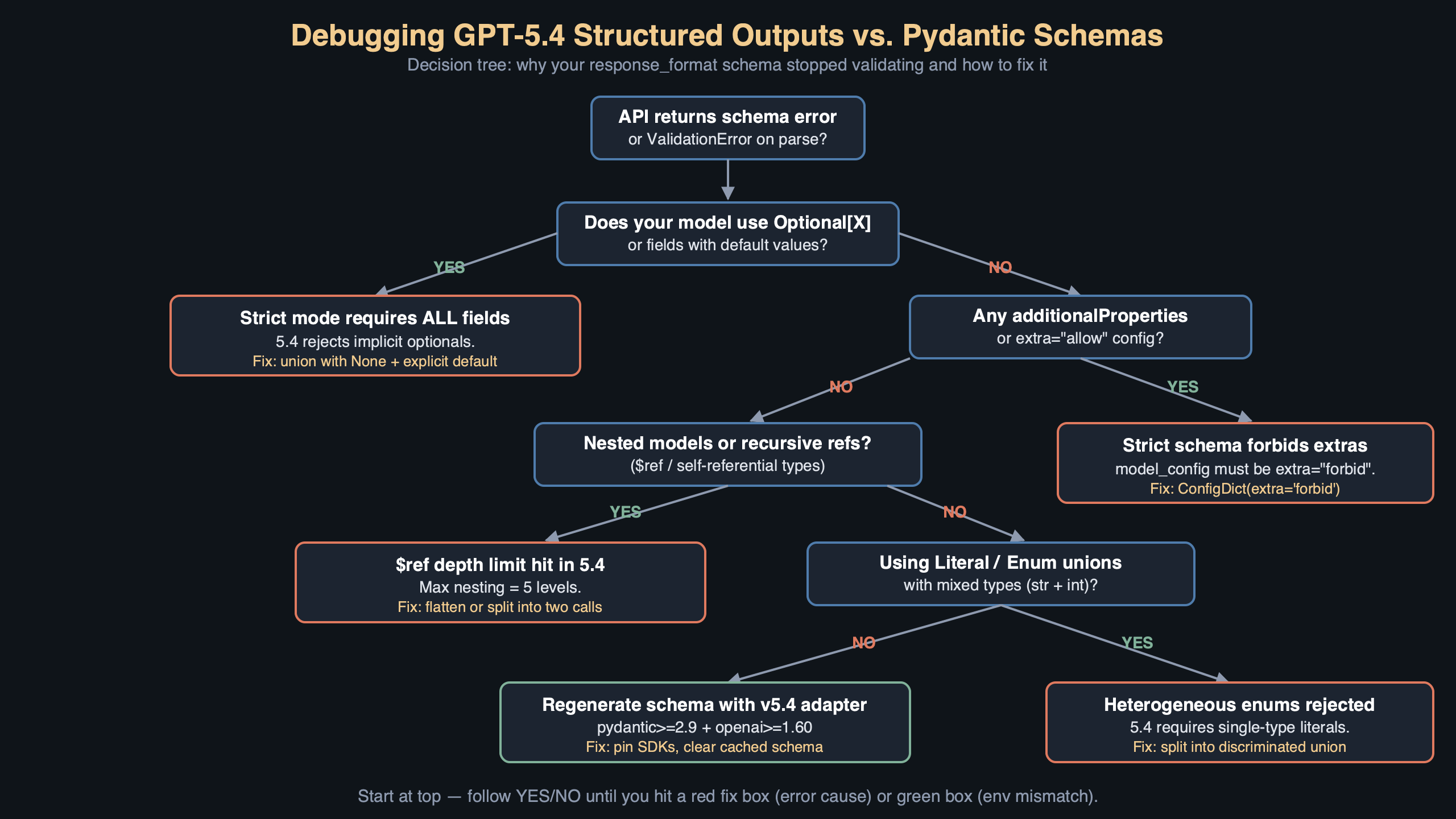

The topic diagram shows the request path from your Python code into OpenAI’s Structured Outputs pipeline. On the left, your Pydantic model passes through model_json_schema(), which emits a JSON Schema draft that matches Pydantic’s conventions. The middle box is the client-side serializer inside the openai SDK, which forwards the schema verbatim. On the right, the GPT-5.4 server runs two validators in sequence: a schema validator that rejects anything outside the supported subset (this is where the additionalProperties error is raised, before inference even starts), and a constrained decoder that forces each generated token to keep the partial output valid. The arrow from the schema validator back to your client is what you see as the 400; the arrow from the decoder is what you see as a parseable, guaranteed-valid response.

What can go wrong

Three failure modes show up often enough that they are worth calling out individually, because each one has a different root cause and a different fix.

1. BadRequestError: 'additionalProperties' is required to be supplied and to be false. This comes from any nested Pydantic model that did not set extra="forbid". The root cause is that Pydantic v2’s default model_config leaves the flag unset on nested $defs, and GPT-5.4’s schema validator walks every node. Fix it by adding the config to every model in the tree:

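# GNU sed syntax (on macOS, install gnu-sed and run gsed instead).
# Each touched file also needs ConfigDict added to its pydantic import.
rg -l "class \w+\(BaseModel\):" src/ | xargs sed -i \
  's/class \(\w*\)(BaseModel):/class \1(BaseModel):\n    model_config = ConfigDict(extra="forbid")/'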

2. BadRequestError: In context=('properties', 'tags'), schema must have a 'type' key. This one bites when you use Optional[list[str]] or Union[str, int]. GPT-5.4 rejects bare anyOf without a type hint on the union members. The root cause is that Pydantic emits {"anyOf": [{"type": "array", ...}, {"type": "null"}]} with no wrapping type, and the April 2026 validator requires one. Fix it by switching to explicit nullable types in the patched schema:

- tags: Optional[List[str]] = None
+ tags: list[str] | None # no default, always required in schema

3. openai.LengthFinishReasonError: response finished with length reason. You do not get a 400 for this one: the request succeeds, but the response is cut off at the token limit, and the SDK's parse helper raises this error rather than handing you incomplete JSON. The root cause is that constrained decoding on GPT-5.4 counts schema scaffolding (keys, brackets, quotes) against max_tokens, and the default of 4,096 is not enough for deeply nested objects. Fix it by raising max_tokens to at least 8,192 for any schema with more than ten fields, or by flattening nested models into a single level:

resp = client.beta.chat.completions.parse(
    model="gpt-5.4-2026-04-02",
    messages=messages,
    response_format=Article,
    max_tokens=8192,
)
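For the second failure mode, one reading of "explicit nullable types in the patched schema" is to fold Pydantic's two-branch anyOf into a single node whose type is a list that includes "null". The sketch below does that with a hypothetical collapse_nullable helper. Whether the April validator accepts this shape is an assumption to confirm against the supported-schemas page, and branches that are $ref nodes are deliberately left untouched.

```python
def collapse_nullable(node):
    """Rewrite anyOf: [X, {"type": "null"}] into X with "null" added to its type."""
    if isinstance(node, list):
        return [collapse_nullable(item) for item in node]
    if not isinstance(node, dict):
        return node
    # Rebuild the dict so the caller's schema is never mutated.
    node = {key: collapse_nullable(value) for key, value in node.items()}
    branches = node.get("anyOf")
    if isinstance(branches, list) and len(branches) == 2:
        non_null = [b for b in branches if b != {"type": "null"}]
        # Only collapse when exactly one branch is null and the other has a plain string type.
        if (
            len(non_null) == 1
            and isinstance(non_null[0], dict)
            and isinstance(non_null[0].get("type"), str)
        ):
            merged = {k: v for k, v in node.items() if k != "anyOf"}
            merged.update(non_null[0])
            merged["type"] = [non_null[0]["type"], "null"]
            return merged
    return node


# The shape Pydantic emits for a nullable list of strings.
tags = {
    "anyOf": [{"type": "array", "items": {"type": "string"}}, {"type": "null"}],
    "title": "Tags",
}
collapsed = collapse_nullable(tags)
print(collapsed)
```

The helper returns a new structure, so it can be applied to the output of model_json_schema() before or after any other patching step.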

Before you ship

Run through this checklist against any codebase you are porting from GPT-5.3 to GPT-5.4. Every item is something you can verify in under a minute, and each one has caught a production regression in at least one public issue on the openai-python tracker.

- Run python -c "from mymodels import Article; import json; print(json.dumps(Article.model_json_schema(), indent=2))" and grep for any object node missing "additionalProperties": false. If grep finds one, that model needs extra="forbid".
- Pin the exact snapshot in your client call (use model="gpt-5.4-2026-04-02", not model="gpt-5.4") so a future server-side validator update does not silently break your pipeline the day it ships.
- Add a unit test that calls client.beta.chat.completions.parse() against a recorded response (use respx or vcrpy) with the current schema, so CI fails the moment a schema change stops matching.
- Set max_tokens explicitly on every structured-output call, at a value at least 50% above the worst-case body size your schema can produce, to avoid the truncation failure mode.
- Remove any client-side Article.model_validate(resp.choices[0].message.content) calls that run on already-parsed responses; the API guarantees schema compliance, and the extra validation adds latency with no safety benefit.
- Run pip show openai pydantic and confirm you are on openai>=1.30.0 and pydantic>=2.7; earlier combinations emit a schema shape that the April validator rejects even with extra="forbid" set.
- Log the response_format schema on first use in every environment, so when a staging-vs-prod failure happens you can diff the actual JSON rather than re-deriving it from source.
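The last checklist item can be sketched with the standard library alone. The helper names and the logger name below are hypothetical; the idea is to log each distinct schema once, together with a short fingerprint that is stable across key order, so two environments can be compared at a glance.

```python
import hashlib
import json
import logging

logger = logging.getLogger("structured_outputs")  # hypothetical logger name
_logged_fingerprints: set[str] = set()


def schema_fingerprint(schema: dict) -> str:
    """Return a short, stable hash of a schema, independent of key order."""
    canonical = json.dumps(schema, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def log_schema_once(name: str, schema: dict) -> bool:
    """Log the full schema the first time each distinct version is seen."""
    fingerprint = schema_fingerprint(schema)
    if fingerprint in _logged_fingerprints:
        return False
    _logged_fingerprints.add(fingerprint)
    logger.info(
        "response_format %s (%s): %s",
        name,
        fingerprint,
        json.dumps(schema, sort_keys=True),
    )
    return True
```

If staging and production log different fingerprints for the same model name, diffing the two logged JSON bodies shows exactly which node changed.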

The one thing to remember

If you only change one thing today, set model_config = ConfigDict(extra="forbid") on every Pydantic model in your request path, then re-run your integration tests against the pinned gpt-5.4-2026-04-02 snapshot and delete any redundant client-side validation. That change clears the most common GPT-5.4 Structured Outputs error and forces every remaining bug to surface as a concrete, type-specific failure you can fix with the patches above.

References

- OpenAI Structured Outputs guide: official documentation for the response_format field and the supported JSON Schema subset, including the additionalProperties and required constraints referenced throughout this article.
- Structured Outputs: all fields must be required: the specific section that spells out why Optional[X] = None no longer works and how to express nullability via a type union instead.
- openai-python GitHub repository: source for client.beta.chat.completions.parse() and the issue tracker where the 400 errors described here are reported and triaged.
- Pydantic v2 JSON Schema documentation: explains how model_config options like extra="forbid" flow into model_json_schema() output, which is the ground truth for what the OpenAI SDK sends.
- Pydantic ConfigDict API reference: the authoritative list of every flag you can set on a model, cited for the extra="forbid" and schema-generation options used in the fix.
- JSON Schema: additionalProperties: the upstream spec for the flag that GPT-5.4’s validator now requires, useful for understanding why server-side validation treats the default as unsafe.