GPT-4o for Coding in 2026: Where It’s the Right Tool and Where It’s Not

If you’re picking an LLM to wire into a coding workflow in 2026, the choice space has gotten more complicated than it was a year ago. GPT-4o is no longer the obvious default — it’s competitive in some categories, behind in others, and the gaps depend more on what you’re doing than on the topline benchmark numbers anyone publishes. This article walks through where GPT-4o still earns its spot in a coding workflow, where the alternatives have caught up or pulled ahead, and what to actually evaluate when you’re picking a model for a specific job.

What GPT-4o is and isn’t

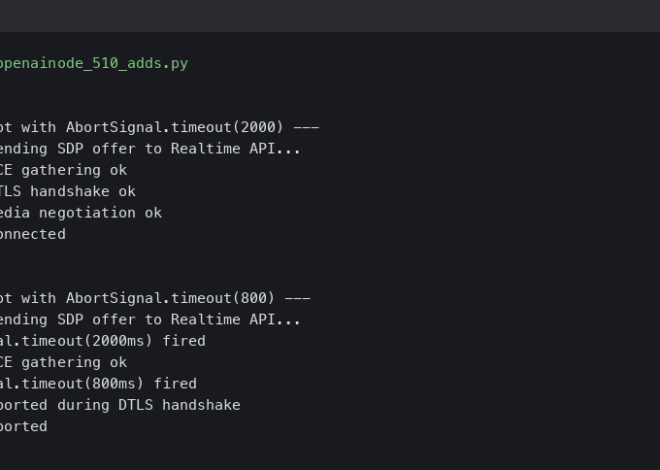

GPT-4o launched in mid-2024 as OpenAI’s flagship multimodal model, handling text, image, and audio inputs and outputs through a unified architecture. The “o” stands for “omni”, and the marketing pitch was that it could reason about all three modalities together. The coding-relevant change was more modest: a moderate improvement over GPT-4 Turbo in most categories, plus a notable speedup at half GPT-4 Turbo’s API price. For pure code tasks, the speedup was the main user-visible win.

Eighteen months later, the picture has shifted. OpenAI shipped GPT-4o updates roughly quarterly that improved instruction-following and reduced hallucination rates on code, and it also launched GPT-4.1, aimed specifically at coding workflows. Anthropic shipped Claude 3.5 Sonnet, then Claude 3.7 Sonnet, then the Claude 4 family including the Opus 4 line, all of which are competitive with or ahead of GPT-4o on common coding benchmarks. Google’s Gemini line went through Gemini 2.0 and 2.5 with similar improvements. The gap between the top-tier models has narrowed dramatically.

For day-to-day coding work, the difference between any of the top models is now smaller than the difference between using a model and not using one. Picking between them is more about workflow fit than raw capability.

Where GPT-4o is still strong

The cases where I’d still reach for GPT-4o specifically rather than a competitor:

- Multi-modal debugging. If you’re trying to figure out why a chart looks wrong, why a UI is laid out incorrectly, or what’s happening in a screenshot of an error message, GPT-4o’s vision integration is faster and more reliable than running a separate vision model. You can paste a screenshot directly and ask “what’s wrong with this layout” and the answer is usually relevant.

- Latency-sensitive workflows. GPT-4o mini is faster than most competing mini-tier models from other labs, and for autocomplete-style use cases (where you want a response in 200ms, not 2 seconds), the latency win matters more than the slight capability gap.

- Function calling with strict JSON schemas. OpenAI’s function calling and structured output APIs are the most mature in the market. If you’re building a tool-use system where the model needs to output JSON that conforms to a specific schema, GPT-4o’s Structured Outputs mode (with strict mode enabled) constrains generation to the schema you supply, so it fails less often than equivalent attempts on models that only follow formatting instructions.

- Embedded in OpenAI-native ecosystems. If your stack already uses OpenAI for embeddings, fine-tuning, or other services, staying in one ecosystem cuts operational complexity, and that saving is worth real money.
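To make the structured-output point concrete, here is a minimal Python sketch of the response_format payload for OpenAI’s Structured Outputs in the Chat Completions API, plus a local check for two of the rules strict mode enforces on object schemas: every property must be listed in required, and additionalProperties must be false. The payload shape reflects OpenAI’s documentation as I understand it and should be checked against the current docs; the schema fields, the review_finding name, and the check_strict_schema helper are hypothetical, written only for illustration.

```python
from typing import Any

# Hypothetical schema for one code-review finding; the field names are illustrative.
FINDING_SCHEMA: dict[str, Any] = {
    "type": "object",
    "properties": {
        "file": {"type": "string"},
        "line": {"type": "integer"},
        "severity": {"type": "string", "enum": ["low", "medium", "high"]},
        "message": {"type": "string"},
    },
    "required": ["file", "line", "severity", "message"],
    "additionalProperties": False,
}

# Structured Outputs payload for the Chat Completions `response_format` argument
# (shape as documented by OpenAI at the time of writing; verify before use).
RESPONSE_FORMAT: dict[str, Any] = {
    "type": "json_schema",
    "json_schema": {"name": "review_finding", "strict": True, "schema": FINDING_SCHEMA},
}


def check_strict_schema(schema: dict[str, Any], path: str = "$") -> list[str]:
    """List violations of two strict-mode rules: all properties required, and
    additionalProperties set to false on every object (hypothetical helper)."""
    problems: list[str] = []
    if schema.get("type") == "object":
        props = schema.get("properties", {})
        if schema.get("additionalProperties") is not False:
            problems.append(f"{path}: additionalProperties must be false")
        missing = sorted(set(props) - set(schema.get("required", [])))
        if missing:
            problems.append(f"{path}: properties not in required: {missing}")
        # Recurse into nested property schemas.
        for name, sub in props.items():
            problems.extend(check_strict_schema(sub, f"{path}.{name}"))
    elif schema.get("type") == "array" and isinstance(schema.get("items"), dict):
        problems.extend(check_strict_schema(schema["items"], f"{path}[]"))
    return problems


if __name__ == "__main__":
    print(check_strict_schema(FINDING_SCHEMA))  # → []
    loose = {"type": "object", "properties": {"note": {"type": "string"}}}
    print(check_strict_schema(loose))
    # With the official SDK, the request would look roughly like:
    # client.chat.completions.create(model="gpt-4o", messages=[...],
    #                                response_format=RESPONSE_FORMAT)
```

Catching these mistakes locally is cheaper than discovering them as a rejected request; the request itself would pass RESPONSE_FORMAT as the response_format argument.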

Where competitors have pulled ahead

The cases where I’d reach for something else first:

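- Long-context code understanding. Anthropic’s Claude line has been notably better at reasoning over large codebases. The 200K-token context windows on Claude Sonnet and Opus models, and the 1M-token window available on some Sonnet versions, are usable in practice in a way GPT-4o’s 128K window isn’t always. “Read this 80,000-line codebase and tell me where the auth logic is” gets a more accurate answer from Claude than from GPT-4o. At that size the code runs to several hundred thousand tokens, so only a 1M-token window takes it in one pass; with GPT-4o you are depending on chunking or retrieval.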

- Complex refactoring tasks. When the work requires understanding the relationships between multiple files and producing edits that are consistent across all of them, Claude 3.7 Sonnet and later models, including Claude Opus 4.x, score noticeably higher than GPT-4o on the public benchmarks (SWE-bench Verified, LiveBench coding), and the gap is visible in real use too.

- Mathematical or formal reasoning embedded in code. DeepSeek’s reasoning models, OpenAI’s o-series (o1 and its successors), and the Gemini 2.5 thinking variants all do better than GPT-4o on “figure out the algorithm before you write the code” tasks. If your task involves proving correctness or working out a non-trivial algorithm, the dedicated reasoning models are worth the extra latency.

- Code in less common languages. GPT-4o is very strong on Python, JavaScript/TypeScript, and Java, the languages that most likely dominate its training mix. For Rust, Zig, OCaml, Elixir, and similar lower-volume languages, Claude tends to produce more idiomatic code. Not always, but consistently enough to be worth checking.
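Before comparing answer quality on a large codebase, it’s worth checking whether the code fits in a given window at all. This Python sketch estimates tokens with a rough four-characters-per-token heuristic (an assumption; real tokenizers vary by language and model, and OpenAI’s tiktoken library gives exact counts for GPT-4o) and compares the total against GPT-4o’s 128K-token window and Claude’s 200K and 1M-token windows. The model labels, the file-extension list, and the 8,000-token reserve for instructions and the answer are illustrative choices, not recommendations.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4  # rough heuristic, not an exact tokenizer

# Nominal context windows in tokens; labels are informal, not API model IDs.
CONTEXT_WINDOWS: dict[str, int] = {
    "gpt-4o": 128_000,
    "claude-200k": 200_000,
    "claude-1m": 1_000_000,
}

SOURCE_SUFFIXES = {".py", ".ts", ".js", ".java", ".rs", ".go"}  # illustrative


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: Path) -> int:
    """Sum estimated tokens over source files anywhere under `root`."""
    total = 0
    for path in root.rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def windows_that_fit(tokens: int, reserve: int = 8_000) -> list[str]:
    """Windows that hold `tokens` plus `reserve` tokens for instructions and answer."""
    return [name for name, size in CONTEXT_WINDOWS.items() if tokens + reserve <= size]


if __name__ == "__main__":
    # 80,000 lines at an assumed 40 characters per line.
    tokens = estimate_tokens("x" * 40 * 80_000)
    print(tokens, windows_that_fit(tokens))  # → 800000 ['claude-1m']
```

At an assumed 40 characters per line, the 80,000-line example works out to roughly 800,000 tokens, which only the 1M-token window can hold in one pass; anything larger needs chunking or retrieval regardless of model.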

The benchmark situation

Publicly available coding benchmarks are a mess and you should treat them as a rough signal rather than ground truth. The two issues:

- Test set contamination. The most-cited benchmarks (HumanEval, MBPP, the original LeetCode-style sets) have been around long enough that every major model has likely seen the answers during training, either directly or through derivative datasets. Topline scores on these benchmarks are measuring memorization as much as capability.

- Selection bias toward solvable problems. Most benchmark coding tasks are short, self-contained, and have a clear pass/fail signal. Real coding work is none of those things. A model that’s great at LeetCode-style problems can be merely okay at the messy, multi-file, half-specified tasks that fill a real engineer’s day.

The benchmarks I’d actually pay attention to in 2026:

- SWE-bench Verified. A human-validated subset of 500 real GitHub issues from real open-source Python repos, scored on whether the model’s patch passes the repository’s tests. The closest thing to a real-world signal in the public benchmark space.

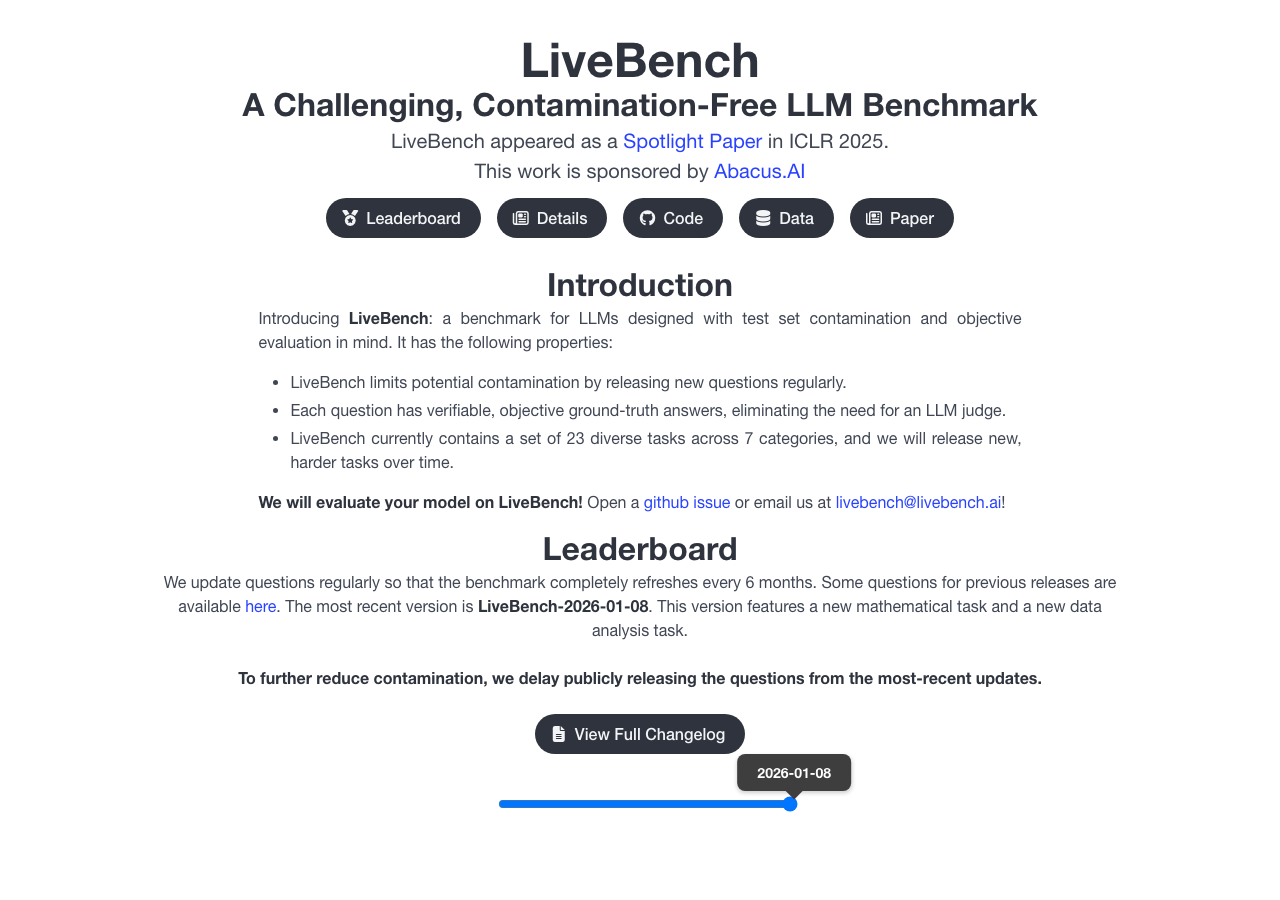

- LiveBench. Refreshes its question set monthly, which limits training contamination, and has a coding subset that’s stable enough to compare models month over month.

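- Aider’s leaderboard. Tests models on coding exercises (the polyglot benchmark uses 225 Exercism problems across C++, Go, Java, JavaScript, Python, and Rust) that must be solved by editing source files through the Aider tool, so it measures both problem-solving and whether the model produces edits the tool can actually apply. Closer to what actual coding-assistant use looks like than single-function benchmarks.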

Avoid: any benchmark whose test set was frozen before 2024, anything with the word “comprehensive” in the name, anything self-reported by a model lab without independent verification.
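The “passes the test suite” scoring in SWE-bench is stricter than it sounds. Each task instance lists the tests the reference fix turns from failing to passing (FAIL_TO_PASS in the dataset) and tests that were already passing and must stay that way (PASS_TO_PASS); a model’s patch counts as resolved only if both sets pass after it is applied. This Python sketch restates that rule over a hypothetical dict of per-test outcomes. It is not the official harness, which also builds each repository’s environment and runs the tests itself, and the Instance class is a simplified stand-in for the real dataset record.

```python
from dataclasses import dataclass, field


@dataclass
class Instance:
    """Minimal stand-in for a SWE-bench task instance (hypothetical structure)."""
    instance_id: str
    fail_to_pass: list[str]  # tests the reference fix turns from failing to passing
    pass_to_pass: list[str] = field(default_factory=list)  # tests that must not regress


def is_resolved(instance: Instance, results: dict[str, bool]) -> bool:
    """Resolved only if every FAIL_TO_PASS and PASS_TO_PASS test passed after the patch.

    `results` maps test name -> passed; a test missing from `results` counts as failed.
    """
    required = instance.fail_to_pass + instance.pass_to_pass
    return all(results.get(test, False) for test in required)


def resolve_rate(instances: list[Instance], runs: dict[str, dict[str, bool]]) -> float:
    """Fraction of instances resolved; an instance with no recorded run is unresolved."""
    if not instances:
        return 0.0
    resolved = sum(is_resolved(i, runs.get(i.instance_id, {})) for i in instances)
    return resolved / len(instances)


if __name__ == "__main__":
    # Hypothetical outcomes: the first patch fixes the bug but breaks an old test.
    tasks = [Instance("demo-1", ["test_bug"], ["test_old"]), Instance("demo-2", ["test_fix"])]
    runs = {"demo-1": {"test_bug": True, "test_old": False}, "demo-2": {"test_fix": True}}
    print(resolve_rate(tasks, runs))  # → 0.5
```

The first demo task shows why the regression check matters: a patch that fixes the reported bug but breaks an existing test scores as unresolved.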

The cost picture

Model pricing dropped substantially across 2024-2025 and is now in a range where most users won’t notice cost as a constraint for personal use. For high-volume API use it still matters. Current per-million-token prices for the main coding-tier models:

- GPT-4o: $2.50 input / $10 output (standard tier)

- GPT-4o mini: $0.15 input / $0.60 output

- Claude 3.7 Sonnet: $3 input / $15 output

- Claude Opus 4: $15 input / $75 output, the higher tier used for the hardest tasks

- Gemini 2.5 Pro: $1.25 input / $10 output for prompts up to 200K tokens (higher above that); the Flash variant is much cheaper

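What that costs in practice depends mostly on how much context each prompt carries. For a coding workflow that runs a few hundred prompts per day with a couple of thousand tokens each, the mini tiers come to a few dollars a month, the flagships to tens or low hundreds of dollars, and Opus-class models to several times that. For a workflow that’s making thousands of API calls per minute through an automated agent, the cost differences dominate, and you’d optimize by routing easy tasks to the mini tier and hard tasks to the flagship.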
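A quick way to see how much prompt size and routing matter is to do the arithmetic. This Python sketch uses per-million-token list prices at the time of writing: GPT-4o at $2.50 / $10, GPT-4o mini at $0.15 / $0.60, Claude 3.7 Sonnet at $3 / $15, and Claude Opus 4 at $15 / $75. The traffic figures (300 prompts a day, 2,000 input and 500 output tokens each) and the 80/20 routing split are assumptions for illustration, not measurements.

```python
# Per-million-token list prices in USD as (input, output), at the time of writing.
# Keys are informal labels, not API model IDs; check current pricing pages.
PRICES: dict[str, tuple[float, float]] = {
    "gpt-4o": (2.50, 10.00),
    "gpt-4o-mini": (0.15, 0.60),
    "claude-3.7-sonnet": (3.00, 15.00),
    "claude-opus-4": (15.00, 75.00),
}


def monthly_cost(model: str, prompts_per_day: int, in_tokens: int,
                 out_tokens: int, days: int = 30) -> float:
    """USD per month if every prompt goes to `model`."""
    price_in, price_out = PRICES[model]
    prompts = prompts_per_day * days
    return prompts * (in_tokens * price_in + out_tokens * price_out) / 1_000_000


def routed_cost(share_to_mini: float, flagship: str = "gpt-4o",
                mini: str = "gpt-4o-mini", **traffic: int) -> float:
    """Blended monthly cost when `share_to_mini` of prompts go to the cheap tier."""
    if not 0.0 <= share_to_mini <= 1.0:
        raise ValueError("share_to_mini must be between 0 and 1")
    return (share_to_mini * monthly_cost(mini, **traffic)
            + (1 - share_to_mini) * monthly_cost(flagship, **traffic))


if __name__ == "__main__":
    # Assumed traffic: 300 prompts/day, 2,000 input and 500 output tokens each.
    traffic = {"prompts_per_day": 300, "in_tokens": 2_000, "out_tokens": 500}
    for model in PRICES:
        print(model, round(monthly_cost(model, **traffic), 2))
    print("80% routed to mini:", round(routed_cost(0.8, **traffic), 2))
```

Under those assumptions, sending everything to GPT-4o costs about $90 a month and everything to GPT-4o mini about $5.40, while routing 80% of prompts to mini brings the blend to about $22; Claude Opus 4 at the same volume lands around $600. The input side scales linearly with context size, so trimming what you send is often the cheapest optimization.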

How to actually pick

The decision framework I’d use, step by step:

- Pick two or three candidate models based on the rough fit for your use case (multi-modal, long context, fast latency, reasoning-heavy).

- Take five to ten real tasks from your work. Not benchmark questions — actual code you needed to write or debug last week.

- Run all candidates against all tasks. Note which produced usable output and which didn’t.

- The winner is the one that handled your actual tasks best, not the one with the highest benchmark score.

This is harder than reading a leaderboard but the leaderboard answer is probably wrong for your specific case. Twenty minutes of real testing on real tasks is more valuable than a week of reading benchmark blog posts.
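The four steps above amount to a small evaluation loop. Here is a minimal Python sketch of one, assuming each candidate model is wrapped in a function that takes a prompt and returns text, and each task carries its own check for “usable output” (for code, that would usually mean running your tests). The Task class, the score and pick_winner helpers, and the stub models in the demo are hypothetical stand-ins; in practice each wrapper would call the provider’s API.

```python
from dataclasses import dataclass
from typing import Callable

Model = Callable[[str], str]  # prompt -> completion


@dataclass
class Task:
    name: str
    prompt: str
    is_usable: Callable[[str], bool]  # your own judgment of "usable", encoded as a check


def score(models: dict[str, Model], tasks: list[Task]) -> dict[str, list[str]]:
    """Run every model on every task; return the names of the tasks each model handled."""
    wins: dict[str, list[str]] = {name: [] for name in models}
    for task in tasks:
        for name, model in models.items():
            try:
                ok = task.is_usable(model(task.prompt))
            except Exception:  # a crash or API error counts as unusable output
                ok = False
            if ok:
                wins[name].append(task.name)
    return wins


def pick_winner(wins: dict[str, list[str]]) -> str:
    """Model that handled the most tasks (ties go to the first one listed)."""
    return max(wins, key=lambda name: len(wins[name]))


if __name__ == "__main__":
    # Hypothetical stand-ins; real wrappers would call each provider's API.
    models: dict[str, Model] = {
        "model-a": lambda p: "def add(a, b):\n    return a + b",
        "model-b": lambda p: "I can't help with that.",
    }
    tasks = [Task("add", "Write add(a, b)", lambda out: "return a + b" in out)]
    wins = score(models, tasks)
    print(wins, pick_winner(wins))
```

The exception handler is deliberate: a model wrapper that times out or errors on one task should count as a failure on that task rather than abort the whole comparison.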

GPT-4o is still a strong default for general coding work in 2026, with particular strengths in multi-modal debugging, low-latency autocomplete, and structured output reliability. For long-context code reasoning, complex refactoring, less common languages, and tasks that require explicit reasoning, the other top-tier models are competitive or ahead. Pick based on your specific workflow, test on real tasks rather than benchmarks, and be willing to switch if the landscape shifts again — which it will, probably twice a year for the foreseeable future.