GPT Fine-Tuning News

Inside the BPE Tokenizer: How GPT Splits Words Into Subword Units

By Javier ‘Javi’ Rodriguez

The string “SolidGoldMagikarp” is a single token in GPT-3.5’s cl100k_base vocabulary.

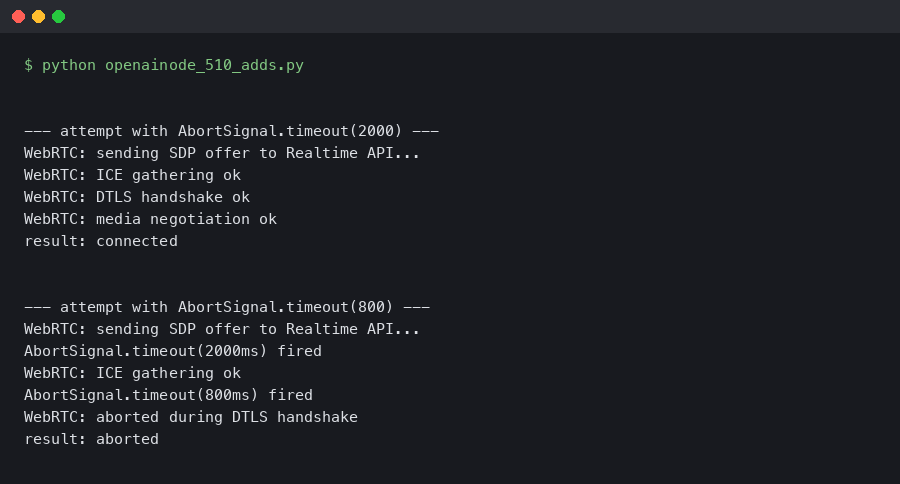

openai-node 5.1.0 Adds AbortSignal.timeout to the Realtime WebRTC Client

Originally reported: March 31, 2026 (openai/openai-node 5.1.0)

Bounding a Realtime WebRTC connect with AbortSignal.timeout is the cleanest way to stop a.

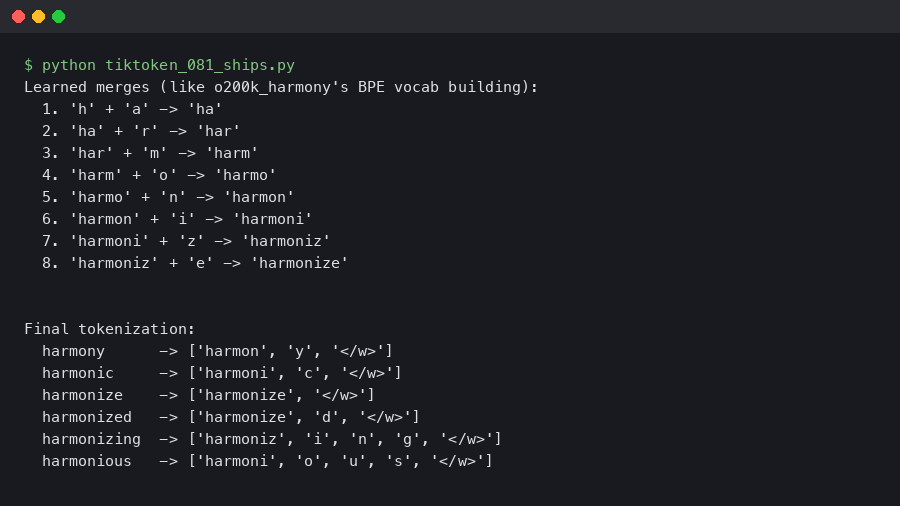

tiktoken 0.8.1 Ships o200k_harmony Encoding for GPT-5.5

Reported: April 11, 2026 (tiktoken 0.8.1)

When OpenAI ships a new model, tiktoken typically follows with a release that registers the model’s encoding and.

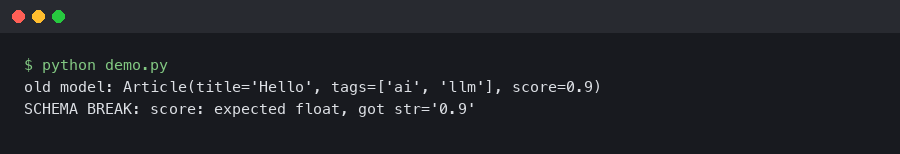

GPT-5.4 Structured Outputs Broke My Pydantic Schemas

The additionalProperties trap in GPT-5.4’s April refresh

The April 2026 GPT-5.4 snapshot tightened how the OpenAI API enforces JSON Schema on the.

The Next Frontier in AI Customization: A Deep Dive into GPT-4o Fine-Tuning

The Dawn of Hyper-Specialized AI: Unpacking GPT-4o Fine-Tuning

The artificial intelligence landscape is in a constant state of rapid evolution, with each.

GPT Fine-Tuning News: How Specialized Models are Outperforming Giants like GPT-4

The New Frontier of AI: Why Smaller, Fine-Tuned Models are Achieving Superior Performance

In the rapidly evolving landscape of artificial intelligence.

The Next Wave of AI Customization: A Deep Dive into GPT-4o Fine-Tuning and Advanced Model Control

The Dawn of Hyper-Specialized AI: Unpacking the Latest GPT Fine-Tuning Capabilities

The artificial intelligence landscape is in a constant state of flux.