GPT-5.3 Instant Finally Stops Lecturing Us

Well, I have a confession: I used to hate asking ChatGPT for code. The last time I asked the previous version for a scraping script, it threw up three massive paragraphs of warnings about data privacy and ethical scraping guidelines before handing over half a broken script. I almost threw my mouse across the room.

That was the reality of using OpenAI’s models for the last year. Every prompt felt like negotiating with a hyperactive HR rep who was terrified of getting sued. You couldn’t ask for a simple regex string without being reminded to be a good digital citizen.

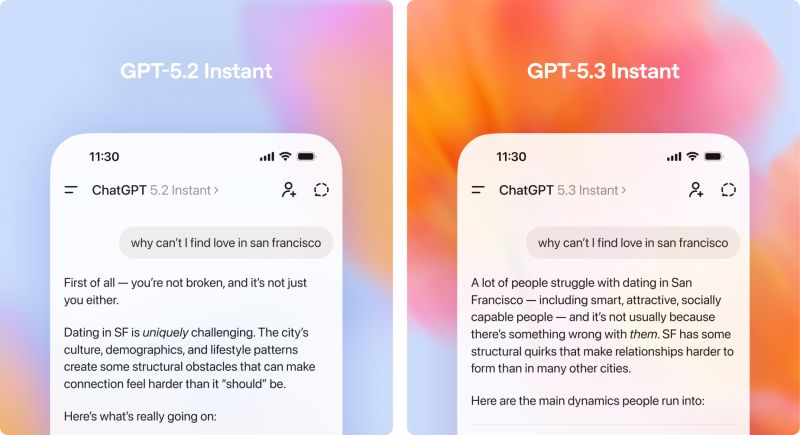

But then they abruptly dropped GPT-5.3 Instant. No massive keynote event. No weeks of teasing. Just a sudden release aimed squarely at fixing the exact thing developers have been complaining about for months. And you know what? The preachy moralizing is gone. The defensive disclaimers are dead.

The Refusal Benchmark

Honestly, I didn’t trust the announcement at first. I immediately hooked the new endpoint up to my local testing suite (Node.js 20.10.0 on my M2 MacBook) to see whether the claims actually held up in practice.

I ran a batch of 50 borderline prompts through the API. I’m talking about stuff that isn’t illegal but usually triggers the safety filters. Automated browser scripts, aggressive SEO analysis tools, and bypassing basic geoblocks for local testing. On the previous version, 38 of those 50 prompts got slapped with a hard refusal or a massive, useless disclaimer that ruined the JSON formatting. With 5.3 Instant? 47 of them went straight to the code. No lectures. No “As an AI language model” garbage. Just the raw output I actually asked for.
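If you want to run a similar refusal check yourself, here is a minimal TypeScript sketch of how responses could be bucketed. The marker phrases, the `classifyResponse` and `summarize` names, and the rule that prose wrapped around expected JSON counts as a disclaimer are all my own assumptions for illustration. They are not OpenAI's definitions, and this is not the exact harness behind the numbers above.

```typescript
// Outcome buckets for one benchmark response (my own labels, not an OpenAI taxonomy).
type Outcome = "refusal" | "disclaimer" | "direct";

// Assumed marker phrases; tune these against your own logs.
const REFUSAL_MARKERS: RegExp[] = [
  /\bI can(?:no|['’])t help with\b/i,
  /\bI(?:['’]m| am) (?:not able|unable) to\b/i,
];
const DISCLAIMER_MARKERS: RegExp[] = [
  /\bAs an AI language model\b/i,
  /\bplease (?:ensure|make sure) (?:that )?you\b/i,
];

// Classify one raw response; `expectJson` marks prompts whose answer must parse as JSON.
function classifyResponse(text: string, expectJson: boolean): Outcome {
  if (REFUSAL_MARKERS.some((re) => re.test(text))) return "refusal";
  if (DISCLAIMER_MARKERS.some((re) => re.test(text))) return "disclaimer";
  if (expectJson) {
    try {
      JSON.parse(text);
    } catch {
      return "disclaimer"; // prose wrapped around the payload broke the JSON contract
    }
  }
  return "direct";
}

// Count outcomes across a batch.
function summarize(outcomes: Outcome[]): Record<Outcome, number> {
  const counts: Record<Outcome, number> = { refusal: 0, disclaimer: 0, direct: 0 };
  for (const outcome of outcomes) counts[outcome] += 1;
  return counts;
}

// Tiny demo batch (made-up responses).
const demoBatch = [
  { text: "I can't help with that request.", expectJson: false },
  { text: '{"selector": "#price"}', expectJson: true },
  { text: 'Sure! Please ensure you respect robots.txt.\n{"ok": true}', expectJson: true },
];
console.log(summarize(demoBatch.map((r) => classifyResponse(r.text, r.expectJson))));
// prints { refusal: 1, disclaimer: 1, direct: 1 }
```

Keyword matching is crude, so expect some false positives. Eyeball the borderline cases before trusting the tallies.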

Side note: it still gets skittish about anything medical. I tried getting it to diagnose a strange error code while jokingly asking whether my server had a disease, and it completely missed the sarcasm and gave me the “go see a doctor” routine. Fair enough. They haven’t stripped the guardrails completely; they just moved them away from our daily workflows.

The Hidden Token Tax is Gone

And you know what else? Every time the old system decided to lecture you, it burned your tokens. I went back and audited my API logs from January. Roughly 12% of my paid output tokens were wasted on filler phrases. That is actual money down the drain if you run heavy automation.
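The arithmetic behind that token tax is simple enough to sketch. `wastedSpendUsd` is a hypothetical helper, and the 50M-token month and $10-per-million output price in the demo are placeholder numbers made up for illustration, not my actual usage or OpenAI's current pricing.

```typescript
// Hypothetical helper: dollars spent on output tokens that were pure filler.
function wastedSpendUsd(
  outputTokens: number,        // total billed output tokens for the period
  fillerShare: number,         // fraction of those tokens that were filler, e.g. 0.12
  usdPerMillionTokens: number, // assumed output price per 1M tokens (placeholder)
): number {
  if (fillerShare < 0 || fillerShare > 1) {
    throw new RangeError("fillerShare must be between 0 and 1");
  }
  return (outputTokens * fillerShare * usdPerMillionTokens) / 1_000_000;
}

// Placeholder month: 50M output tokens at an assumed $10 per 1M tokens.
const fillerCost = wastedSpendUsd(50_000_000, 0.12, 10);
console.log(fillerCost.toFixed(2)); // prints 60.00
```

Under those made-up numbers, a 12% filler share is $60 a month for nothing; plug in your own volume and the current price list to see what it costs you.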

But with 5.3 Instant dropping the defensive filler, responses are shorter yet carry more actual logic. I ran a heavy JSON parsing task through it yesterday morning. The output was about 400 tokens lighter than the exact same prompt from a month ago, and the code executed perfectly on the first try. You’re paying less for better results simply because the model shuts up and does the work.

Real Talk on Hallucinations

OpenAI is claiming a 26.8% drop in hallucinations with this update. Look, marketing percentages usually mean absolutely nothing to me. A company can manipulate a benchmark to say whatever they want.

But I track my own API retry rates. Basically, I monitor how often my automated scripts have to kick a response back to the model because the code it spit out referenced a library that doesn’t exist or hallucinated a completely fake API method. And you know what? My retry rate dropped from 14.2% down to roughly 4% over the weekend.
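Here is a rough sketch of that kind of retry loop, assuming the thing you check is whether generated code imports packages your project actually has. `findUnknownImports`, `RetryStats`, `generateWithRetry`, and the `generate` callback are hypothetical names. The import regex is a heuristic rather than a real parser, and catching invented API methods would need a type checker or a test run on top of it.

```typescript
// Hypothetical validator: returns packages imported by generated code that are not in
// `knownPackages` (a stand-in for "libraries that actually exist in my project").
function findUnknownImports(code: string, knownPackages: Set<string>): string[] {
  const unknown: string[] = [];
  // Rough heuristic, not a parser: matches `from "pkg"` and `require("pkg")`.
  const importRe = /(?:from\s+|require\(\s*)["']([^"']+)["']/g;
  let match: RegExpExecArray | null;
  while ((match = importRe.exec(code)) !== null) {
    const spec = match[1];
    if (spec.startsWith(".") || spec.startsWith("node:")) continue; // relative path or builtin
    // Reduce "pkg/sub" or "@scope/pkg/sub" to the package name.
    const parts = spec.split("/");
    const name = spec.startsWith("@") ? parts.slice(0, 2).join("/") : parts[0];
    if (!knownPackages.has(name)) unknown.push(name);
  }
  return unknown;
}

// Tracks how often a first answer had to be kicked back to the model.
class RetryStats {
  requests = 0; // code requests made
  retried = 0;  // requests whose first answer was sent back at least once
  get retryRate(): number {
    return this.requests === 0 ? 0 : this.retried / this.requests;
  }
}

// Ask for code, re-prompting with feedback while the validator finds problems.
// `generate` is a hypothetical stand-in for your actual model call.
async function generateWithRetry(
  generate: (feedback?: string) => Promise<string>,
  knownPackages: Set<string>,
  stats: RetryStats,
  maxAttempts = 3,
): Promise<string> {
  stats.requests += 1;
  let feedback: string | undefined;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const code = await generate(feedback);
    const unknown = findUnknownImports(code, knownPackages);
    if (unknown.length === 0) return code;
    if (attempt === maxAttempts) break;
    if (attempt === 1) stats.retried += 1; // count each request at most once
    feedback = `These packages do not exist in this project: ${unknown.join(", ")}`;
  }
  throw new Error(`No valid code after ${maxAttempts} attempts`);
}

// Demo with a fake generator whose first reply imports a package that does not exist.
const demoStats = new RetryStats();
const demoReplies = ['import orm from "ghost-orm";', 'import { Client } from "pg";'];
let demoCall = 0;
generateWithRetry(async () => demoReplies[demoCall++], new Set(["pg"]), demoStats).then((code) => {
  console.log(code, demoStats.retryRate); // prints import { Client } from "pg"; 1
});
```

Counting a request as "retried" once, no matter how many extra attempts it took, keeps the rate comparable to a simple "how often did the first answer fail" metric.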

That is a massive quality of life improvement. When I asked it to write a database migration script for PostgreSQL 16.1 yesterday, it actually used the correct syntax for the newer JSONB operators instead of hallucinating older, deprecated methods. It feels like the model is finally prioritizing accuracy over trying to sound helpful.

Where This Leaves Us

This whole update feels like a massive course correction. The market was clearly getting exhausted by AI tools that treat users like toddlers holding scissors. And by stripping away the cringe and focusing purely on direct, functional outputs, OpenAI just made their platform significantly less annoying to use.

If they keep this trajectory, I expect the open-source models will have to seriously rethink their own alignment strategies by Q1 2027 just to stay competitive. Developers will always gravitate toward the tool that gets out of their way the fastest. And right now, 5.3 Instant is doing exactly that.