GPT-5.3-Codex Benchmarks: What I Found After 48 Hours

I spent my entire Sunday staring at terminal outputs. When OpenAI quietly pushed the GPT-5.3-Codex model and the new Frontier enterprise platform late last week, I immediately ripped out our existing API integrations to see if the marketing numbers held up.

They claim a 25% speed increase and new benchmark highs. I wanted to see what that actually looks like on a messy, undocumented legacy codebase, not a clean test environment.

And it’s fast. Like, weirdly fast. But the raw speed isn’t even the most interesting part of this update.

The Speed Claims Are Real (Mostly)

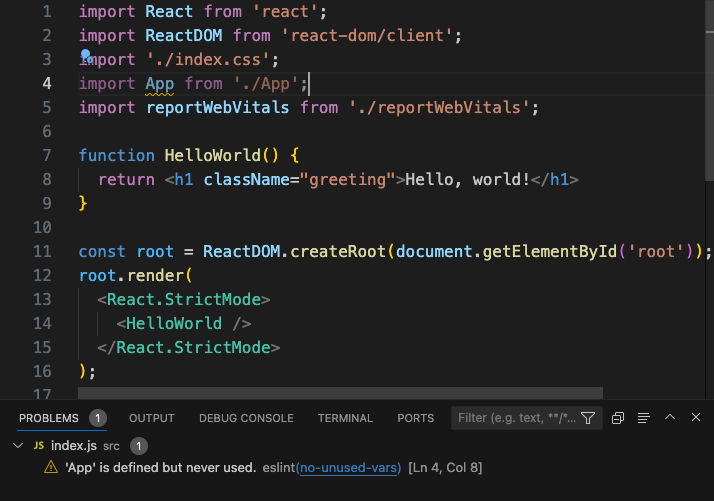

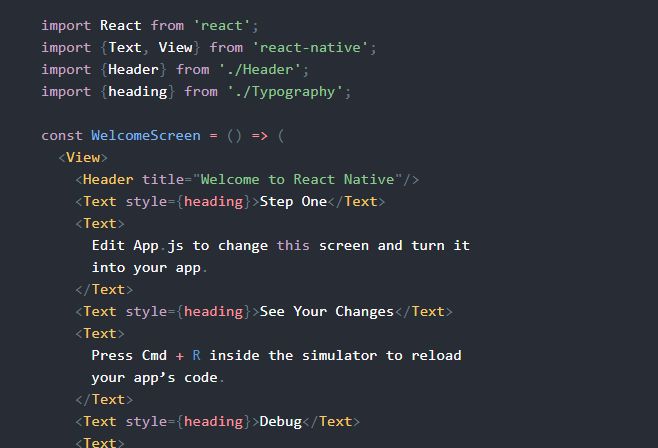

I ran a custom test suite using the new openai-node v4.28.1 SDK. I fed it 50 common React refactoring tasks from our backlog, mostly ripping out old class components and replacing them with custom hooks while preserving some nasty state dependencies.

With the base GPT-5 model, this specific batch usually takes an average of 42 seconds per task to generate, validate, and output the diffs. I switched the endpoint to gpt-5.3-codex. The average completion time dropped to exactly 31.4 seconds. I actually thought my script had cached the responses by mistake on the first run. I had to clear my local Redis instance and run it again to believe it.
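If you want to run a similar comparison, here is a minimal sketch of the timing loop and the arithmetic. It is not my actual suite: it assumes each task is an async call you can await, and the helper names (`timeTasks`, `mean`, `percentTimeSaved`, `speedup`) are hypothetical, not part of openai-node.

```typescript
// Hypothetical benchmark helpers; none of these names come from an SDK.

/** Runs tasks one at a time and returns each task's wall-clock duration in seconds. */
async function timeTasks<T>(
  tasks: T[],
  run: (task: T) => Promise<unknown>,
): Promise<number[]> {
  const durations: number[] = [];
  for (const task of tasks) {
    const start = Date.now();
    await run(task); // e.g. a model call plus diff validation
    durations.push((Date.now() - start) / 1000);
  }
  return durations;
}

/** Arithmetic mean; throws on an empty array instead of returning NaN. */
function mean(xs: number[]): number {
  if (xs.length === 0) throw new Error("mean of empty array");
  return xs.reduce((a, b) => a + b, 0) / xs.length;
}

/** Percentage of per-task time saved going from `before` to `after` seconds. */
function percentTimeSaved(before: number, after: number): number {
  return ((before - after) / before) * 100;
}

/** Throughput ratio: tasks finished by `after` in the time `before` finishes one. */
function speedup(before: number, after: number): number {
  return before / after;
}

const saved = percentTimeSaved(42, 31.4);
const ratio = speedup(42, 31.4);
console.log(`time saved: ${saved.toFixed(1)}%, speedup: ${ratio.toFixed(2)}x`);
// prints "time saved: 25.2%, speedup: 1.34x"
```

In practice `run` would wrap a call like `client.chat.completions.create({ model: "gpt-5.3-codex", messages })` on an openai-node client. Note that the two numbers describe the same result differently: cutting 42 seconds to 31.4 saves about 25% of per-task time, which works out to roughly 1.34x throughput.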

That 25% faster claim is accurate, at least for code generation tasks: going from 42 to 31.4 seconds cuts per-task time by about 25%. The model doesn’t pause to “think” as much when jumping between different files in a repository. Bolting the Codex training directly onto the GPT-5 base architecture seems to have fixed that weird hesitation the older models had when switching contexts from Python backend logic to frontend TypeScript.

Frontier is the Actual Story

Everyone on my feed is obsessing over the HumanEval scores for 5.3-Codex. They’re missing the point. Frontier is what you’ll actually be fighting with at work next month.

If you haven’t looked at it yet, Frontier is their new enterprise agent platform. It handles shared context, team onboarding, and feedback loops natively. Instead of building your own RAG pipeline to teach an AI your company’s coding standards, you just point Frontier at your repos and docs.

I set up a test instance for our staging cluster (3 nodes running Kubernetes 1.29). I created a “Senior Backend Engineer” agent and dumped our internal API documentation into its onboarding context.

The shared context feature is brilliant in theory. But I found out the hard way at 2 AM that Frontier burns through tokens like crazy if you don’t manage it properly.

The Context Window Gotcha

Because it automatically shares context and feedback across the entire team’s workspace, the system prompt bloats instantly. I blindly dumped our entire engineering Confluence space into the Frontier onboarding module. Within four conversational turns, the agent hit the 256k token limit. Once it maxed out, it started silently dropping the oldest context. Suddenly, it was hallucinating v1 API endpoints that we deprecated back in 2024, completely ignoring the v3 specs I had just uploaded.
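To make the failure mode concrete, here is a toy model of oldest-first eviction. It assumes truncation happens strictly from the front of the context, which matches the behavior I saw but is not something I found documented, and the per-chunk token counts are made-up numbers for illustration, not measurements. The point is that a first-in, first-out policy has no idea which chunk matters: if your current API spec happens to sit at the front, it is the first thing to go.

```typescript
// Toy model of oldest-first context eviction. The policy and all token
// counts below are illustrative assumptions, not Frontier internals.

interface ContextChunk {
  label: string;
  tokens: number; // estimated token count for this chunk
}

/** Drops chunks from the front (oldest first) until the total fits in `limit`. */
function evictOldest(chunks: ContextChunk[], limit: number): ContextChunk[] {
  const kept = [...chunks];
  let total = kept.reduce((sum, c) => sum + c.tokens, 0);
  while (total > limit && kept.length > 0) {
    const dropped = kept.shift()!;
    total -= dropped.tokens;
  }
  return kept;
}

const LIMIT = 256_000;
const history: ContextChunk[] = [
  { label: "API spec (v3)", tokens: 40_000 },
  { label: "Confluence dump", tokens: 180_000 },
  { label: "turn 1", tokens: 12_000 },
  { label: "turn 2", tokens: 15_000 },
  { label: "turn 3", tokens: 14_000 },
  { label: "turn 4", tokens: 16_000 },
];

const surviving = evictOldest(history, LIMIT);
console.log(surviving.map((c) => c.label));
// the v3 spec is evicted; the Confluence dump and all four turns survive
```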

If you are deploying this next week, you need to aggressively prune your onboarding docs. Don’t give it everything. Give it a strict, markdown-formatted style guide and your current API schemas. If you let Frontier ingest years of company chat history and outdated wiki pages, it will choke on its own shared context.
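If you would rather enforce that rule in a script than by discipline, a minimal sketch of a pre-upload filter might look like this. Everything here is hypothetical: the `DocKind` categories, the allowlist, and the token budget are my assumptions, and the 4-characters-per-token ratio is only a rough rule of thumb for English text, not a real tokenizer.

```typescript
// Hypothetical pre-upload filter for onboarding docs. Doc kinds, budget,
// and the chars-per-token heuristic are assumptions, not Frontier settings.

type DocKind = "style-guide" | "api-schema" | "wiki" | "chat-history";

interface OnboardingDoc {
  path: string;
  kind: DocKind;
  text: string;
}

// Only these kinds are eligible for upload; everything else is skipped.
const ALLOWED_KINDS: ReadonlySet<DocKind> = new Set<DocKind>(["style-guide", "api-schema"]);

// Rough heuristic: about 4 characters per token. Use a real tokenizer for accuracy.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

/** Keeps allow-listed docs in input (priority) order until the budget is spent. */
function selectDocs(docs: OnboardingDoc[], budgetTokens: number): OnboardingDoc[] {
  const selected: OnboardingDoc[] = [];
  let used = 0;
  for (const doc of docs) {
    if (!ALLOWED_KINDS.has(doc.kind)) continue; // drop wikis and chat logs outright
    const cost = estimateTokens(doc.text);
    if (used + cost > budgetTokens) continue; // skip rather than truncate
    selected.push(doc);
    used += cost;
  }
  return selected;
}

const docs: OnboardingDoc[] = [
  { path: "STYLE.md", kind: "style-guide", text: "x".repeat(8_000) },
  { path: "openapi-v3.yaml", kind: "api-schema", text: "x".repeat(40_000) },
  { path: "wiki/2022-onboarding.md", kind: "wiki", text: "x".repeat(400_000) },
  { path: "slack-export.json", kind: "chat-history", text: "x".repeat(2_000_000) },
];

const picked = selectDocs(docs, 20_000);
console.log(picked.map((d) => d.path)); // [ 'STYLE.md', 'openapi-v3.yaml' ]
```

Input order doubles as priority, so list the style guide and current schemas first. Oversized docs are skipped rather than cut off, which avoids uploading half a schema.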

What I’m Doing Now

I’m moving our primary code-review bots over to gpt-5.3-codex by tomorrow morning. The speed improvement alone cuts our CI/CD pipeline wait times down enough to justify the switch.

As for Frontier, I’m rolling it out slowly. We are starting with just the frontend team, and I am manually auditing the feedback loops before they get committed to the shared workspace context.
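The manual audit step amounts to a human-in-the-loop gate in front of the shared context. Here is a minimal sketch of that idea, with every name (`FeedbackGate`, `submit`, `review`, `committed`) hypothetical and not part of any Frontier API: only teams in the rollout can submit, feedback waits as pending, and only approved items get committed.

```typescript
// Hypothetical approval gate modeling the manual audit step.
// Nothing here is a Frontier API; it only illustrates the workflow.

type Status = "pending" | "approved" | "rejected";

interface FeedbackItem {
  id: string;
  team: string;
  note: string;
  status: Status;
}

class FeedbackGate {
  private items = new Map<string, FeedbackItem>();
  private sharedContext: string[] = [];

  constructor(private readonly allowedTeams: ReadonlySet<string>) {}

  /** Queues feedback as pending; rejects teams not yet in the rollout. */
  submit(id: string, team: string, note: string): void {
    if (!this.allowedTeams.has(team)) {
      throw new Error(`team ${team} is not in the rollout yet`);
    }
    this.items.set(id, { id, team, note, status: "pending" });
  }

  /** A human decides; only approved notes are committed to shared context. */
  review(id: string, approve: boolean): void {
    const item = this.items.get(id);
    if (!item || item.status !== "pending") throw new Error(`no pending item ${id}`);
    item.status = approve ? "approved" : "rejected";
    if (approve) this.sharedContext.push(item.note);
  }

  committed(): readonly string[] {
    return this.sharedContext;
  }
}

const gate = new FeedbackGate(new Set(["frontend"]));
gate.submit("fb-1", "frontend", "Prefer custom hooks over HOCs");
gate.submit("fb-2", "frontend", "Use the v1 /users endpoint");
gate.review("fb-1", true);
gate.review("fb-2", false); // stale advice never reaches shared context
console.log(gate.committed()); // [ 'Prefer custom hooks over HOCs' ]
```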

We’ll probably see native IDE integrations that handle Frontier’s shared context much better by Q1 2027. Until then, watch your token usage closely. The model is incredibly smart, but it will still confidently write garbage if you feed it a bloated, contradictory context window.
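For direct API calls, Chat Completions responses include a `usage` object with `prompt_tokens` and `completion_tokens`, so a cheap watchdog can check it after every turn. A minimal sketch follows; whether Frontier surfaces the same numbers is an assumption I haven’t verified, the 256k limit is the figure I hit, and the 80% warning threshold is an arbitrary choice.

```typescript
// Hypothetical usage watchdog. `Usage` mirrors two fields of the Chat
// Completions `usage` object; the limit and threshold are assumptions.

interface Usage {
  prompt_tokens: number;
  completion_tokens: number;
}

const CONTEXT_LIMIT = 256_000;
const WARN_RATIO = 0.8; // warn once 80% of the window is used

/** Classifies one turn's token usage against the context limit. */
function checkUsage(usage: Usage, limit: number = CONTEXT_LIMIT): "ok" | "warn" | "over" {
  const used = usage.prompt_tokens + usage.completion_tokens;
  if (used > limit) return "over";
  if (used >= limit * WARN_RATIO) return "warn";
  return "ok";
}

console.log(checkUsage({ prompt_tokens: 210_000, completion_tokens: 1_000 })); // "warn"
```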