GPT-5.3-Codex API is live: My first 48 hours with it

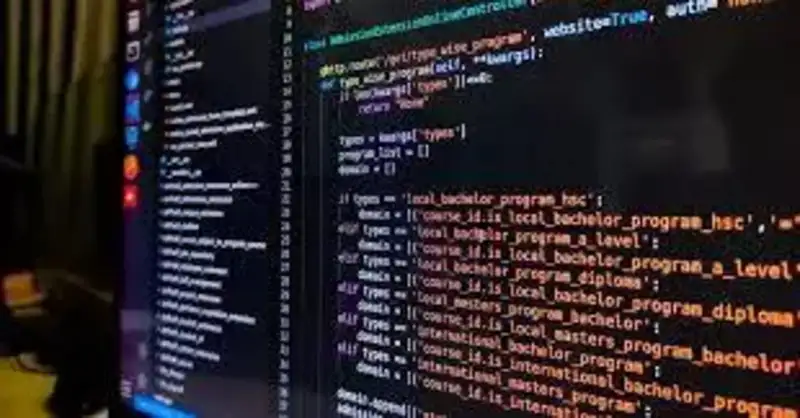

I spent most of last night ripping out our old code-generation pipeline. OpenAI has finally opened up the GPT-5.3-Codex model to third-party developers through their API and Microsoft Foundry. I’ve been waiting for this since the initial whispers late last year, mostly because our current setup was choking on larger repository contexts.

Getting access wasn’t just a matter of flipping a switch. If you’re provisioning this through Microsoft Foundry like I am, there’s a weird quirk with the regional availability that isn’t documented anywhere obvious.

The Foundry Deployment Trap

I tried spinning up the Codex endpoint in my usual West US 3 resource group. I kept getting a cryptic HTTP 429 - InsufficientQuota error. My tier limits were completely fine. I wasted about an hour debugging my IAM roles before I checked the developer Discord.

Turns out, the high-throughput endpoints for 5.3-Codex are stealth-locked to East US and Sweden Central right now. Once I migrated the deployment over to East US, it connected instantly. I’m currently hitting it with the openai-node v4.28.0 SDK, and it’s been stable since the migration.
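If you script your deployments, a small guard like this would have saved me that hour. It’s a minimal sketch, not anything from the Foundry tooling: the region list is just the two regions that worked for me, and `CODEX_REGIONS` and `pickRegion` are names I made up for illustration.

```typescript
// Regions where the high-throughput 5.3-Codex endpoints worked for me.
// Assumption: this list is anecdotal and will probably change over time.
const CODEX_REGIONS: readonly string[] = ["eastus", "swedencentral"];

// Hypothetical helper: return the preferred region if it is in the
// supported list, otherwise fall back to the first supported region.
function pickRegion(
  preferred: string,
  supported: readonly string[] = CODEX_REGIONS,
): string {
  if (supported.length === 0) {
    throw new Error("no supported regions configured");
  }
  // Normalize display names like "West US 3" to the compact "westus3" form.
  const normalized = preferred.toLowerCase().replace(/\s+/g, "");
  return supported.includes(normalized) ? normalized : supported[0];
}
```

With this in place, asking for `"West US 3"` quietly falls back to `eastus` instead of failing at deploy time with a quota error that has nothing to do with quota.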

Real-World Benchmarks (Not the Marketing Fluff)

Everyone wants to know whether it’s actually smarter than the 4-series or Claude 3.5 Sonnet. Short answer: yes, but specifically at repository-level refactoring.

I usually ignore the official evals because nobody actually codes like that. Instead, I threw a 45,000-line legacy React codebase at it. The task was highly specific: strip out an old, heavily mutated Redux implementation and replace it with Zustand, while maintaining 100% of the existing test coverage.

The older models would always choke around the seventh or eighth file. They’d lose the context of the global state tree and start hallucinating prop names. But 5.3-Codex processed the entire batch in 84 seconds.

The Context Window Actually Works

The most impressive part isn’t the raw speed. It’s how well the model holds onto instructions across a long context.

When you dump 100k tokens of messy enterprise code into an API, you expect it to forget the instructions you put at the very top of the system prompt. I explicitly told Codex to use strict TypeScript interfaces for the new Zustand stores and to avoid the any type at all costs, and it followed that instruction perfectly across 42 different file modifications.
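If you want to spot-check a claim like that on your own output, a quick scan for explicit `any` usage is easy to throw together. This is a rough sketch, not the check I’d trust in CI: `findExplicitAny` is a hypothetical helper, the regex is a heuristic that can misfire on strings and unusual formatting, and a proper check would go through the compiler or the `@typescript-eslint/no-explicit-any` lint rule.

```typescript
// Heuristic pattern for an explicit `any` in a type position:
// after ":", "<", ",", "|", "&", or an `as` cast.
const EXPLICIT_ANY = /(?:[:<,|&]\s*|\bas\s+)any\b/;

// Return the 1-based line numbers that appear to use an explicit `any`.
// Lines that consist only of a `//` comment are skipped.
function findExplicitAny(source: string): number[] {
  const hits: number[] = [];
  source.split("\n").forEach((line, index) => {
    if (line.trim().startsWith("//")) return;
    if (EXPLICIT_ANY.test(line)) hits.push(index + 1);
  });
  return hits;
}
```

Run it over each modified file and treat an empty array as a pass. Identifiers that merely contain the letters "any", like `company`, don’t trip it, because the pattern only looks at type positions.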

What About the Audio Endpoints?

They bundled the new native audio models into this Foundry release too. I haven’t hammered these as hard yet since my focus is mostly on the code generation side.

But I did pipe a 40-minute architecture meeting recording through the gpt-5.3-audio-preview endpoint just to see what would happen. It correctly attributed voices, ignored the tangents, and even extracted the exact JSON schema we agreed upon verbally.

Where This Leaves Us

The pricing is aggressive. It’s cheaper than I expected for a flagship coding model, clearly designed to force competitors to drop their API costs. And based on what I’m seeing, I expect we’ll see a massive deprecation of custom fine-tuned coding models by Q1 2027.

I’m migrating our entire internal tooling over to the 5.3-Codex API this weekend. I’ll probably hit some rate limits once the rest of the world wakes up, but the latency improvements are too good to ignore.
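For the rate limits, I’m planning on plain exponential backoff with jitter. Here’s a minimal sketch of what I have in mind. `backoffDelayMs` and `withRetry` are my own hypothetical helpers, the defaults are guesses, and the code assumes a thrown error carries a numeric `status` field, so adjust that check to whatever your client actually throws.

```typescript
// Exponential backoff with "full jitter": pick a random delay between 0 and
// min(capMs, baseMs * 2^attempt). `random` is injectable so the function
// can be tested deterministically.
function backoffDelayMs(
  attempt: number,
  baseMs: number = 500,
  capMs: number = 30000,
  random: () => number = Math.random,
): number {
  const ceiling = Math.min(capMs, baseMs * Math.pow(2, attempt));
  return Math.floor(random() * ceiling);
}

// Retry `call` when it fails with status 429, up to `maxAttempts` tries.
// Assumption: the thrown error exposes a numeric `status` property.
async function withRetry<T>(
  call: () => Promise<T>,
  maxAttempts: number = 5,
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await call();
    } catch (err) {
      const status = (err as { status?: number } | null)?.status;
      // Give up on non-429 errors or once the attempts are used up.
      if (status !== 429 || attempt >= maxAttempts - 1) throw err;
      const delay = backoffDelayMs(attempt);
      await new Promise<void>((resolve) => setTimeout(resolve, delay));
    }
  }
}
```

The jitter matters more than it looks: if every worker in our tooling retries on the same schedule, they all hit the endpoint again at the same instant and get throttled together.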