What GPT-5.2’s “Compaction” Actually Does to Your Code

I’ll admit the migration to the new GPT-5.2 and Gemini 3 Flash endpoints wasn’t exactly smooth sailing. But it works, and that’s the important part, right?

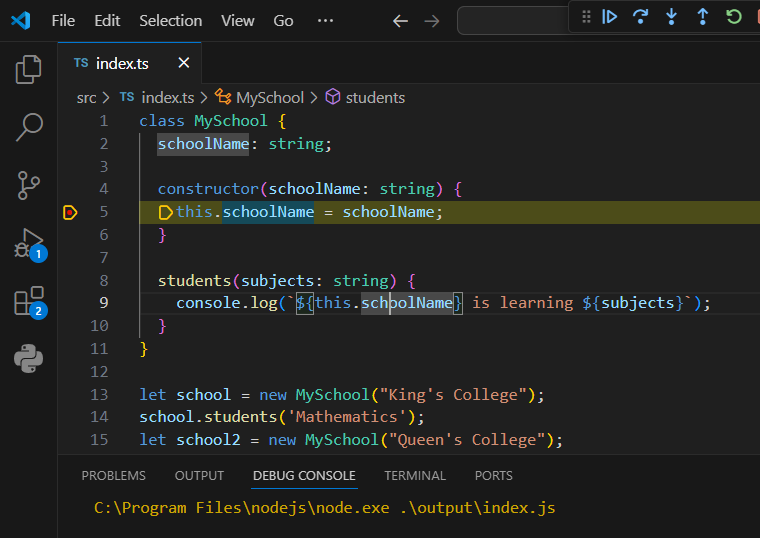

Let’s cut through the marketing hype and talk about what really matters: OpenAI’s “Compaction” architecture, and why your terminal is suddenly doing things on its own. To see what Compaction could actually do, I fed our entire legacy billing service into the GPT-5.2 API: 412 TypeScript files running on Node.js 22.1.0. Under the old GPT-4.5 endpoints, that would have been a total nightmare. The new Compaction layer, though, cut the token count from 142k to 18k, roughly an 87% reduction. Insane, right?

Here’s the catch: Compaction gets a little too aggressive with non-standard docstrings. The previous developer clearly wasn’t a fan of clean code, so the codebase was full of odd inline comments, and the Compaction engine simply threw them away to save tokens. Without those comments, an edge case got lost, which led to a fun little null-reference bug that nearly had us billing a client three times before our staging tests caught it. Whoops.
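The post doesn’t show the actual bug, so here is a hypothetical illustration of two defenses that survive comment-stripping: put the “this may be null” contract in the type (`string | null`, enforced by `strictNullChecks`) instead of in a comment, and make the charge idempotent so a retry can’t bill the same invoice twice. The `Invoice` shape, `chargeOnce`, and the `TENOFF` code are invented for this sketch; a real service would persist processed invoice IDs (for example, behind a unique database constraint) rather than keep them in memory.

```typescript
// Hypothetical billing types; names are illustrative, not from the real service.
interface Invoice {
  id: string;
  customerId: string;
  amountCents: number;
  // Nullable in the type itself, so the contract survives even if comments are stripped.
  discountCode: string | null;
}

// In-memory record of invoices already charged (a real service would persist this).
const processedInvoices = new Set<string>();
const ledger: Array<{ invoiceId: string; amountCents: number }> = [];

function applyDiscount(amountCents: number, discountCode: string | null): number {
  // With strictNullChecks, the compiler forces this null check before using the code.
  if (discountCode === null) {
    return amountCents;
  }
  // "TENOFF" is a made-up code giving 10% off.
  return discountCode === "TENOFF" ? Math.round(amountCents * 0.9) : amountCents;
}

// Charges an invoice at most once; repeated calls for the same ID are no-ops.
function chargeOnce(invoice: Invoice): boolean {
  if (processedInvoices.has(invoice.id)) {
    return false;
  }
  const total = applyDiscount(invoice.amountCents, invoice.discountCode);
  ledger.push({ invoiceId: invoice.id, amountCents: total });
  processedInvoices.add(invoice.id);
  return true;
}

const inv: Invoice = { id: "inv-001", customerId: "c-42", amountCents: 10000, discountCode: null };
console.log(chargeOnce(inv), chargeOnce(inv), chargeOnce(inv)); // true false false
console.log(ledger.length); // 1
```

Even if a retry loop fires three times, the ledger records a single charge.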

Then there’s Google’s Gemini 3 Flash, which is pushing hard into the whole “agentic terminal” thing. I hooked it up to my Warp terminal, and let me tell you, it’s fast: I watched it diagnose a Docker build issue, rewrite the `entrypoint.sh` script, and restart the daemon, all in about six seconds. Crazy stuff. But definitely sandbox it. I accidentally let it loose in a directory containing my AWS credentials, and my heart almost stopped. It turned out to be just linting my JSON files, but that still wasn’t what I had in mind.
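The post doesn’t describe how the Warp/Gemini integration launches commands, so this is a generic sketch assuming you control the process that executes agent-proposed commands. It runs each command in a throwaway directory with secret-looking environment variables removed, and points `HOME` at that directory so tools like the AWS CLI can’t fall back to `~/.aws/credentials`. `runAgentCommand`, `scrubbedEnv`, and the secret-name pattern are hypothetical; this reduces accidental exposure but is not a security boundary, so a container or VM is still the stronger option.

```typescript
import { spawnSync } from "node:child_process";
import { mkdtempSync, rmSync } from "node:fs";
import { tmpdir } from "node:os";
import { join } from "node:path";

// Variable names treated as sensitive. This list is an assumption; extend it for your stack.
// NODE_OPTIONS is dropped too, so inherited preload flags don't run inside agent commands.
const SECRET_PATTERN = /^(AWS_|GOOGLE_|GCP_|AZURE_)|SECRET|TOKEN|PASSWORD|API_KEY|CREDENTIAL|^NODE_OPTIONS$/i;

// Copy only non-secret variables and redirect HOME so credential files in ~ aren't found.
function scrubbedEnv(source: NodeJS.ProcessEnv, scratchDir: string): NodeJS.ProcessEnv {
  const env: NodeJS.ProcessEnv = {};
  for (const [name, value] of Object.entries(source)) {
    if (value !== undefined && !SECRET_PATTERN.test(name)) {
      env[name] = value;
    }
  }
  env.HOME = scratchDir;
  env.USERPROFILE = scratchDir; // Windows equivalent of HOME
  return env;
}

// Run one agent-proposed command (no shell) inside a temporary working directory.
function runAgentCommand(command: string, args: string[]): { status: number | null; stdout: string } {
  const scratchDir = mkdtempSync(join(tmpdir(), "agent-"));
  try {
    const result = spawnSync(command, args, {
      cwd: scratchDir,
      env: scrubbedEnv(process.env, scratchDir),
      encoding: "utf8",
      timeout: 30_000, // kill runaway commands after 30 seconds
    });
    return { status: result.status, stdout: result.stdout ?? "" };
  } finally {
    // Clean up the scratch directory whether or not the command succeeded.
    rmSync(scratchDir, { recursive: true, force: true });
  }
}
```

For example, `runAgentCommand(process.execPath, ["-e", "console.log(process.env.AWS_SECRET_ACCESS_KEY ?? 'none')"])` shows whether a credential leaks into the child process.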

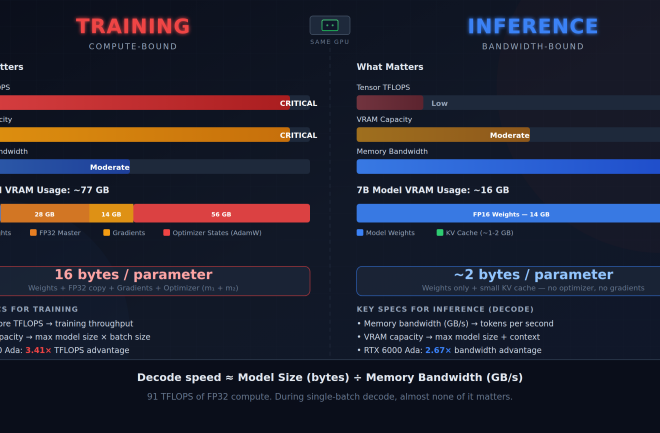

Anyway, the infrastructure is finally starting to catch up to all this AI-powered madness. Kubernetes 1.35 has been a lifesaver, absorbing those massive, unpredictable memory spikes without melting our staging cluster. Our bare-metal Ubuntu 24.04 setup is pulling its weight too, cutting OOM kills by 80% compared to last month.

So where does that leave us? In a weird transition phase, that’s for sure. These engines are writing code across hundreds of files, the infrastructure is scrambling to keep up, and you’ve gotta keep a close eye on the whole process. Compaction is brilliant until it deletes your edge cases. Gemini is amazing until it tries to `rm -rf` the wrong temp folder.
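One cheap guard against the wrong-folder `rm -rf` is to check every deletion target against an allowed root before running it. A minimal sketch, assuming the agent’s commands reach you as an already-split argument list with globs and variables already expanded; `reviewRm` and `isStrictlyInside` are hypothetical helpers, not part of any agent tool. The check is purely lexical, so a symlink inside the root that points elsewhere would slip through; resolving existing paths with `fs.realpathSync` first would close that gap.

```typescript
import { isAbsolute, relative, resolve, sep } from "node:path";

type Verdict = { allowed: boolean; reason: string };

// True only if `target` resolves to a path strictly inside `root` (the root itself is rejected).
// Lexical check only: symlinks are not followed.
function isStrictlyInside(target: string, root: string): boolean {
  const rel = relative(resolve(root), resolve(target));
  return rel !== "" && rel !== ".." && !rel.startsWith(".." + sep) && !isAbsolute(rel);
}

// Review an agent-proposed `rm` invocation before executing it.
function reviewRm(args: string[], cwd: string, allowedRoot: string): Verdict {
  const flags = args.filter((a) => a.startsWith("-"));
  const targets = args.filter((a) => !a.startsWith("-"));
  // -r, -R, -rf, -fr, or --recursive all request a recursive delete.
  const recursive = flags.some((f) => f === "--recursive" || /^-[^-]*[rR]/.test(f));
  if (targets.length === 0) {
    return { allowed: false, reason: "no targets given" };
  }
  for (const target of targets) {
    const absolute = resolve(cwd, target);
    if (!isStrictlyInside(absolute, allowedRoot)) {
      return { allowed: false, reason: `${absolute} is outside ${allowedRoot}` };
    }
  }
  return { allowed: true, reason: recursive ? "recursive delete inside allowed root" : "delete inside allowed root" };
}

console.log(reviewRm(["-rf", "build"], "/tmp/agent/work", "/tmp/agent").allowed); // true
console.log(reviewRm(["-rf", "../../home"], "/tmp/agent/work", "/tmp/agent").allowed); // false
```

Rejecting the root itself, not just paths above it, means the agent can clean up inside its scratch space but never wipe the whole thing.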

I’m sticking with GPT-5.2 for the big-picture stuff, like architecture planning and boilerplate generation. But for complex, messy refactors, I still prefer good ol’ Claude. Use the right tool for the job: that’s my motto. And for the love of all that is holy, keep your production secrets out of your local environment variables if you’re gonna let Gemini play in your terminal.