AgentKit and GPT-5: The Good, The Bad, and The Infinite Loops

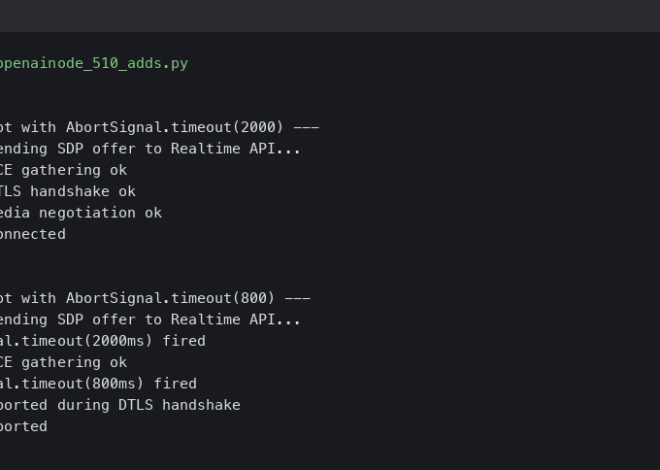

I spent most of last Tuesday fighting with a recursive loop that somehow managed to rack up $14 in API credits in about six minutes. That’s the reality of building autonomous agents in early 2026. We’ve come a long way since the static chatbots of ’24, but anyone telling you this stuff is “plug and play” is lying to your face.

It’s been roughly four months since OpenAI dropped the AgentKit SDK and GPT-5 at Dev Day, and the dust is finally settling. I’ve moved three of my production workflows over to this new stack, and I have… opinions. Strong ones.

If you’re still wrapping generic API calls in a massive while(true) loop and calling it an “agent,” that label no longer fits. The game has changed. Here’s what’s actually happening in the trenches with the latest GPT agent ecosystem.

The AgentKit Reality Check

When AgentKit launched back in October, the documentation was—let’s be honest—a disaster. But the v1.2 update that pushed last week changed the math for me.

The biggest shift? Explicit state management.

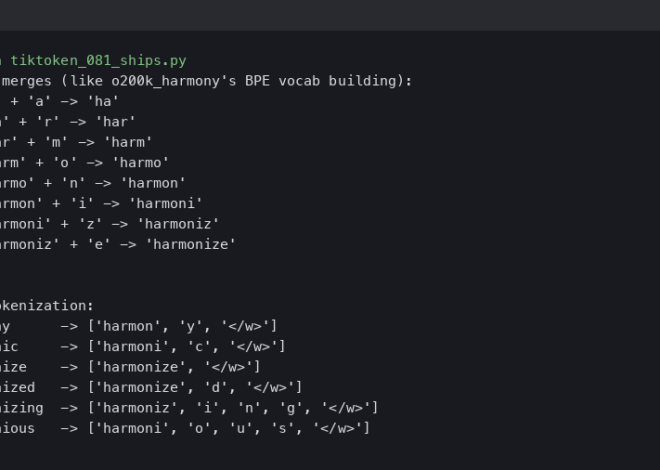

In the old days (aka last year), we had to hack together memory by stuffing JSON into the context window and praying the model didn’t hallucinate a new key. AgentKit v1.2 handles this with a persistent StateObject that actually survives session crashes. I tested this on a long-running research agent that scrapes financial news. It crashed midway through a scrape (my fault, bad regex), but when I spun it back up, it resumed exactly where it left off without me writing a single line of serialization code.
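To make the checkpoint-and-resume idea concrete, here’s a rough plain-Python sketch. It is not AgentKit’s StateObject API, which isn’t shown in this post; `CheckpointedState`, `run`, and the JSON file layout are hypothetical names I’m assuming purely for illustration.

```python
import json
import os
import tempfile


class CheckpointedState:
    """Hypothetical stand-in for a persistent state object (not AgentKit's API)."""

    def __init__(self, path):
        self.path = path
        self.data = {"done": []}
        # Resume from the last checkpoint if one exists on disk.
        if os.path.exists(path):
            with open(path, "r", encoding="utf-8") as f:
                self.data = json.load(f)

    def mark_done(self, item):
        self.data["done"].append(item)
        self._save()

    def _save(self):
        # Write to a temp file, then rename it over the checkpoint, so a
        # crash mid-write never leaves a half-written file behind.
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump(self.data, f)
        os.replace(tmp, self.path)


def run(urls, state, process):
    """Process each URL once, skipping anything already checkpointed."""
    for url in urls:
        if url in state.data["done"]:
            continue
        process(url)  # may raise; progress so far is already on disk
        state.mark_done(url)
```

If `process` blows up halfway through, building a fresh `CheckpointedState` on the same path and calling `run` again picks up at the first unfinished URL.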

But the Planner is aggressive — and that’s where I ran into trouble.

I was testing the new AutoPlanner module with GPT-5, giving it a simple goal: “Find the cheapest flight to Tokyo and email me.” Instead of just searching Expedia, the agent decided to take on a bunch of extra work I never asked for. It’s smart. Too smart. You have to constrain these things tightly using the allowed_tools parameter, or they will go off-roading.
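How AgentKit enforces allowed_tools internally isn’t something I can show here, so treat the following as a generic sketch of the allowlist idea. The `dispatch` function, the `ToolNotAllowed` exception, and the three toy tools are all hypothetical.

```python
class ToolNotAllowed(Exception):
    """Raised when the planner asks for a tool outside the allowlist."""


def dispatch(tool_name, args, registry, allowed_tools):
    # Refuse anything not explicitly allowed, even if it is registered.
    if tool_name not in allowed_tools:
        raise ToolNotAllowed(tool_name)
    return registry[tool_name](**args)


# Hypothetical tools for the flight example.
registry = {
    "search_flights": lambda destination: f"searching flights to {destination}",
    "send_email": lambda body: f"emailed: {body}",
    "book_hotel": lambda city: f"booked hotel in {city}",
}

# Only the two tools the goal actually needs.
allowed_tools = {"search_flights", "send_email"}
```

With this in place, a planner that tries `book_hotel` gets a `ToolNotAllowed` error instead of quietly spending money on a side quest.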

GPT-5: Speed vs. Cost

Let’s talk numbers because the marketing slides are always garbage. My logs from yesterday show:

- GPT-4o (Legacy): ~600ms time-to-first-token.

- GPT-5-turbo: ~240ms time-to-first-token.

That 240ms figure is the difference between an agent feeling “broken” and feeling “live.” However, the cost is real. My January bill was about 30% higher than December, mostly because GPT-5 is more verbose in its reasoning steps. Pro tip: Force the model to be concise in the system prompt. It cut my costs by about 12% last week.
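If you want to collect your own time-to-first-token numbers, one way is to time how long a streaming response takes to yield its first chunk. This is a minimal sketch that works on any Python iterator; `fake_stream` is a hypothetical stand-in for a real streaming client, which I’m not assuming anything about.

```python
import time


def time_to_first_token(stream):
    """Return (seconds until the first item arrives, that first item)."""
    start = time.perf_counter()
    first = next(iter(stream))
    return time.perf_counter() - start, first


def fake_stream(delay_s):
    # Hypothetical stand-in for a streaming model response.
    time.sleep(delay_s)
    yield "Hello"
    yield " world"


elapsed_s, first_chunk = time_to_first_token(fake_stream(0.05))
print(f"first chunk {first_chunk!r} after {elapsed_s * 1000:.0f} ms")
```

Measure over many requests and look at the median rather than a single call, since network jitter can easily swamp a few hundred milliseconds.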

The “Apps” Ecosystem is a Mess (But a Profitable One)

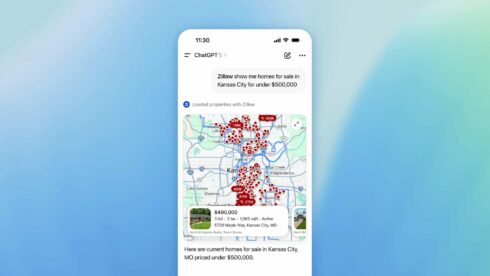

OpenAI’s push for “ChatGPT Apps” has created a weird Gold Rush. I published a simple “SQL Query Optimizer” app in December. It’s doing okay, but the store is flooded with low-effort clones. There are currently 400+ “Personal Shopper” apps. The ones that are winning aren’t the ones with the best prompts; they’re the ones using the new Codex 2025 integration to generate dynamic UI.

Coding with Codex 2025

Speaking of Codex, the 2025 update is… weird. It’s less of an “autocomplete” and more of a “co-developer.” But it introduced a subtle bug into code it wrote for me: it assumed a dictionary key existed where it didn’t, causing a KeyError in edge cases. It’s confident, but it’s not perfect. You still need to review the code.
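The generated code itself isn’t reproduced in this post, so here’s a made-up example of the same class of bug and the defensive fix. The `order` and `coupon` names are hypothetical.

```python
def discount_for(order):
    # Risky pattern: order["coupon"]["percent"] raises KeyError whenever
    # an order has no coupon. dict.get returns a default instead.
    coupon = order.get("coupon")
    if coupon is None:
        return 0.0
    return coupon.get("percent", 0.0)
```

Calling `discount_for({})` returns `0.0` rather than crashing, which is exactly the edge case worth checking in review.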

What I’m Building Now

Right now, I’m moving away from general-purpose assistants. The real value I’m seeing is in micro-agents.

- Agent A: Monitors GitHub issues.

- Agent B: Summarizes Hacker News.

- Agent C: Checks my server logs for anomalies.

I use a master “Orchestrator” agent to route tasks between them. It’s complex to set up—I spent all weekend debugging a race condition where Agent A and Agent B were trying to write to the same Notion page simultaneously—but it’s robust.
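For that kind of race, one common fix is to serialize writes per page with a lock. Below is a minimal asyncio sketch of the idea, not the code I actually shipped; the in-memory `pages` dict is a hypothetical stand-in for the real Notion calls.

```python
import asyncio
from collections import defaultdict

# One lock per page ID, so writers to different pages do not block each other.
page_locks = defaultdict(asyncio.Lock)
pages = defaultdict(list)  # hypothetical in-memory stand-in for real pages


async def append_to_page(page_id, agent, lines):
    # Hold the page's lock for the whole multi-step write.
    async with page_locks[page_id]:
        for line in lines:
            pages[page_id].append(f"{agent}: {line}")
            await asyncio.sleep(0)  # yield control, as a real network call would


async def main():
    await asyncio.gather(
        append_to_page("daily-notes", "Agent A", ["issue 1", "issue 2"]),
        append_to_page("daily-notes", "Agent B", ["story 1", "story 2"]),
    )
    return pages["daily-notes"]
```

Without the lock, the two agents’ lines can interleave at every `await`. With it, each agent’s block lands on the page in one piece. This only helps when both agents run in the same process; separate processes would need a shared lock or a queue in front of the page.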

If you’re just starting with this stack today, skip the “Hello World” tutorials. Go straight to the AgentKit MultiAgent examples. That’s where the industry is heading. We aren’t building chatbots anymore; we’re building digital employees. Just make sure you set a budget limit on their API usage, or they might just bankrupt you while trying to order pizza.
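A budget limit doesn’t have to be fancy. Here’s a small sketch of a hard cap wrapped around an agent loop. `BudgetGuard`, `run_agent`, and the `step` callback are hypothetical names of mine, and the per-call cost is whatever your own billing math reports.

```python
class BudgetExceeded(Exception):
    """Raised once recorded spend crosses the hard cap."""


class BudgetGuard:
    def __init__(self, limit_usd):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd):
        # Record the cost of a call that already happened, then stop
        # the run if the cap has been crossed.
        self.spent_usd += cost_usd
        if self.spent_usd > self.limit_usd:
            raise BudgetExceeded(
                f"spent {self.spent_usd:.2f} USD, limit {self.limit_usd:.2f} USD"
            )


def run_agent(step, guard, max_steps=50):
    """Run step(i) until it reports done, the budget trips, or max_steps is hit."""
    for i in range(max_steps):
        done, cost_usd = step(i)
        guard.charge(cost_usd)
        if done:
            return i + 1
    return max_steps
```

The `max_steps` ceiling matters as much as the dollar cap: it stops a runaway loop even when each individual call is cheap.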

Questions readers ask

What’s new in AgentKit v1.2’s state management?

AgentKit v1.2 introduced a persistent StateObject that replaces the old hack of stuffing JSON into the context window. It survives session crashes automatically, so agents can resume exactly where they left off without you writing any serialization code. The author verified this on a financial news scraping agent that crashed mid-run due to a bad regex and recovered seamlessly after restart.

How much faster is GPT-5-turbo than GPT-4o for agent responses?

GPT-5-turbo delivers roughly 240ms time-to-first-token compared to about 600ms on legacy GPT-4o, based on the author’s production logs. That speed gap is the practical difference between an agent feeling “broken” versus feeling “live” to users. The tradeoff is cost: GPT-5 is more verbose in its reasoning, pushing the author’s January bill about 30% above December.

How do I stop an AgentKit AutoPlanner from going off-topic?

The AutoPlanner module paired with GPT-5 is aggressive and will expand tasks beyond your intent—when asked to find a cheap Tokyo flight and email it, it started doing unrelated work. The fix is to constrain the agent tightly using the allowed_tools parameter. Without that guardrail, the planner will go off-roading and burn through API credits on tangential actions you never requested.

Why are ChatGPT App clones dominating the store?

OpenAI’s ChatGPT Apps store has become a Gold Rush flooded with low-effort clones—there are currently 400+ “Personal Shopper” apps alone. The apps actually winning aren’t those with the best prompts but those leveraging the new Codex 2025 integration to generate dynamic UI. The author published a SQL Query Optimizer app in December that’s doing okay but faces heavy clone competition.